Adobe Community

Variables not consistently producing accurate click counts

Hello,

HELP! We have been using Captivate to simulate medical software workflows successfully for 2 years. We recently started using projects to test end users' workflows (performance-based exams). We are using different variables to count correct and incorrect clicks. Each time we run through the simulation, we get different results (counts). 50% of the time we reach the expected outcome; the other 50% it is incorrect, and among those wrong outcomes, the same scenario produces different results. We have checked the advanced actions and variables for accuracy multiple times. We are at our wits' end! We are assuming something is broken in Captivate itself, because our logic seems to be flawless. Does anyone have any experience with this type of logic?

Thank you.

---

I have also created a project to track the number of mistakes that a user makes.

Without more detail, this is tough to answer, but let me offer a tip.

A troubleshooting trick I use frequently is to create a SmartShape that displays the variable in question, such as:

`$$myVariable$$`

Place that on the slide and start clicking through your project. Watch the variable on each click: note where it changes as you expect and where it does not. If I suspect a variable is not updating the way it is supposed to, this helps pinpoint where the logic is breaking.
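
The same watch-the-variable idea can be modeled outside Captivate when you want to compare a run against what you expected. This is a minimal Python sketch, not Captivate code: it assumes each click is represented by a hypothetical function that updates a dictionary of variables, snapshots the values after every click, and reports the first click where they differ from the expected values. The names `trace_clicks`, `first_divergence`, and the click functions are illustrative only.

```python
from typing import Callable, Dict, List, Optional

Vars = Dict[str, int]
Action = Callable[[Vars], None]


def trace_clicks(actions: List[Action], variables: Vars) -> List[Vars]:
    """Run each click's action in order and snapshot the variables after it,
    much like reading $$myVariable$$ off a SmartShape after every click."""
    snapshots: List[Vars] = []
    for action in actions:
        action(variables)
        snapshots.append(dict(variables))  # copy, so later clicks can't change it
    return snapshots


def first_divergence(snapshots: List[Vars], expected: List[Vars]) -> Optional[int]:
    """Return the 1-based number of the first click whose values differ from
    what you expected, or None if every compared click matched."""
    for number, (actual, wanted) in enumerate(zip(snapshots, expected), start=1):
        if actual != wanted:
            return number
    return None


def correct_click(v: Vars) -> None:
    v["correct"] += 1


def miswired_click(v: Vars) -> None:
    # Hypothetical bug: this object should increment "wrong", not "correct".
    v["correct"] += 1


if __name__ == "__main__":
    snaps = trace_clicks([correct_click, miswired_click, correct_click],
                         {"correct": 0, "wrong": 0})
    expected = [
        {"correct": 1, "wrong": 0},
        {"correct": 1, "wrong": 1},
        {"correct": 2, "wrong": 1},
    ]
    for number, snap in enumerate(snaps, start=1):
        print(f"after click {number}: {snap}")
    print("first divergence at click", first_divergence(snaps, expected))
```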

---

Another strategy: when I make a project designed to test a very specific sequence, there is typically one pathway I need learners to follow.

I create a variable called step that tracks the number of the step the learner should currently be on in the process.

If they click anything other than the correct button, I might increment my mistake variable; if they are correct, I increment my step variable.

So I have lots of logic that checks every step for the right and wrong conditions.

if (step==1) yada this

if (step==2) yada that
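
The step-based approach can be sketched as a small state machine. This is a minimal Python model, not Captivate code: `step` and `mistakes` mirror the step and mistake variables described above, while `EXPECTED_SEQUENCE`, the button names, and `run` are hypothetical placeholders.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical button IDs, in the single order the learner must click them.
EXPECTED_SEQUENCE: List[str] = ["open_chart", "select_order", "sign_order"]


@dataclass
class StepTracker:
    step: int = 1      # step the learner should be on (1-based)
    mistakes: int = 0  # wrong clicks so far

    @property
    def finished(self) -> bool:
        return self.step > len(EXPECTED_SEQUENCE)

    def click(self, button: str) -> None:
        if self.finished:
            return  # workflow complete; ignore further clicks
        # Same idea as a chain of "if (step == N)" checks: look up which
        # button is correct for the current step.
        if button == EXPECTED_SEQUENCE[self.step - 1]:
            self.step += 1      # correct: move on to the next step
        else:
            self.mistakes += 1  # anything else is a mistake


def run(buttons: List[str]) -> StepTracker:
    tracker = StepTracker()
    for button in buttons:
        tracker.click(button)
    return tracker


if __name__ == "__main__":
    result = run(["open_chart", "sign_order", "select_order", "sign_order"])
    print(result.step, result.mistakes, result.finished)
```

Keeping progress and mistakes in two separate counters makes it easy to see, with the SmartShape trick, whether a wrong click was counted as a mistake or silently ignored.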

---

Thanks for the tips. We aren't using step-order logic. We just want to make sure they click on each task, and if a task is not clicked, mark it incorrect (a mistake). So we have two variables: wrongchoice (not clicked) and correct choice. We don't care about the order in which they perform the clicks, so we have multiple advanced actions that jump between slides.
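
For this order-independent case, one way to model it is a per-task flag that is set once when the task is clicked, plus a tally of unclicked tasks at the end. A minimal Python sketch, assuming one variable per task in the real project; `wrongchoice` mirrors the variable named above, while `correctchoice`, the task names, and `score` are hypothetical.

```python
from typing import Dict, Iterable

TASKS = ("task1", "task2", "task3")  # hypothetical task names


def score(clicks: Iterable[str]) -> Dict[str, int]:
    # One flag per task, like one Captivate variable per task (0 = not clicked yet).
    clicked = {task: 0 for task in TASKS}
    wrongchoice = 0
    for target in clicks:
        if target in clicked:
            clicked[target] = 1  # set, not increment, so a repeat click is harmless
        else:
            wrongchoice += 1     # click on a distractor object
    # End of the workflow: every task still at 0 was missed.
    missed = sum(1 for value in clicked.values() if value == 0)
    return {"correctchoice": len(TASKS) - missed, "wrongchoice": wrongchoice + missed}


if __name__ == "__main__":
    print(score(["task3", "task1"]))
    print(score(["task1", "decoy", "task1"]))
```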

---

If you are also doing a lot of slide jumping, you'll want two SmartShapes showing your two variables on each slide so you can see them whichever slide you go to. You could just set them to Rest of Project, I suppose.

Again, just watch the variables for whatever choice you make. They should increment accordingly; if they don't, you know the specific click where it broke.

Also, the sequence of operations makes a difference in some cases. The logic may look complete, but an action that happens before or after another one could be undoing something.

For example, I might say that if a variable equals 0, we show something and set the variable to 1. But if my very next statement says that if the variable equals 1, hide something and set the variable to 0, both will have fired and it will look like nothing happened. The reverse is true as well.

In other words, the logic may look sound when each step is taken in isolation, but combined with other actions it can generate conflicts. That is where the troubleshooting boxes can make a big difference in tracking things down.
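
The show/hide example above can be reproduced in a few lines. This is a minimal Python sketch, not Captivate code, and it assumes the two conditions run one after the other on the same click, the way two separate statements or decisions would: with two independent `if` checks both branches fire, while a single check with an `else` branch (If/Else in advanced-action terms) lets only one run. The function names are hypothetical.

```python
from typing import Dict

State = Dict[str, object]


def toggle_two_ifs(state: State) -> State:
    # Two independent checks, evaluated one after the other.
    if state["flag"] == 0:
        state["visible"] = True   # show something
        state["flag"] = 1
    if state["flag"] == 1:        # now true because of the block above
        state["visible"] = False  # ...so it is hidden again
        state["flag"] = 0
    return state


def toggle_if_else(state: State) -> State:
    # One check with an else branch: exactly one branch runs per click.
    if state["flag"] == 0:
        state["visible"] = True
        state["flag"] = 1
    else:
        state["visible"] = False
        state["flag"] = 0
    return state


if __name__ == "__main__":
    print(toggle_two_ifs({"flag": 0, "visible": False}))  # unchanged: both fired
    print(toggle_if_else({"flag": 0, "visible": False}))  # shown, flag set to 1
```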

---

How many advanced actions do you have? Maybe too many? Have you tried shared actions? In my consultancy jobs, I have found that they are much less of a burden on the project.
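
One practical upside of a shared action is that a single parameterized action replaces many near-identical copies, so there is only one place where the logic can go wrong. As a loose analogy only (plain Python, not Captivate's shared-action feature), compare one copied action per task with one function that takes the task as a parameter; all names here are hypothetical.

```python
from typing import Dict

Vars = Dict[str, int]


# Copy-per-task style: one near-identical action for each task. A slip in
# any single copy is easy to miss when there are dozens of them.
def on_task1_click(v: Vars) -> Vars:
    v["task1_done"] = 1
    return v


def on_task2_click(v: Vars) -> Vars:
    v["task1_done"] = 1  # copy-paste slip: should set "task2_done"
    return v


# Shared-action style: one action, with the task passed in as a parameter.
def on_task_click(v: Vars, task: str) -> Vars:
    v[f"{task}_done"] = 1
    return v


if __name__ == "__main__":
    print(on_task2_click({"task1_done": 0, "task2_done": 0}))          # task2 never recorded
    print(on_task_click({"task1_done": 0, "task2_done": 0}, "task2"))  # task2 recorded
```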

---

We’ve been thinking about that, but I’m not sure that applies here. What we’re doing is incrementing a counter as we go along. Once we go back to the TOC (last step), we check whether several other variables have a value of 0, and if they do, they too are added to the wrong count. So that’s the last action, and it’s not undoing anything; it’s simply looking at a couple of other variables.

This doesn’t explain why this would work if at least one of the clicks is on an object that’s associated with a variable that we’re examining to see if it’s got a value of 0. If we were examining only non-0 variables, this would all work.

I can’t think of how this could be done in any other way, because you can’t examine those “0” variables until the end of the operation – the user has to have completed the entire workflow.
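
The end-of-workflow check at the TOC can be modeled as follows. A minimal Python sketch with hypothetical variable names, contrasting two ways of writing that check: one decision per variable, which adds 1 for each variable still at 0, and a single decision whose conditions are combined with OR (the "any of the conditions" option), which adds at most 1 no matter how many variables are 0. Whether the project uses the second form is an assumption; it is simply one pattern worth ruling out.

```python
from typing import Dict


def tally_per_variable(wrong: int, flags: Dict[str, int]) -> int:
    # One decision per flag: each flag still at 0 adds one to the wrong count.
    for value in flags.values():
        if value == 0:
            wrong += 1
    return wrong


def tally_any_condition(wrong: int, flags: Dict[str, int]) -> int:
    # One decision with OR-ed conditions: the action runs at most once,
    # so two or more missed tasks still add only one.
    if any(value == 0 for value in flags.values()):
        wrong += 1
    return wrong


if __name__ == "__main__":
    flags = {"task1": 1, "task2": 0, "task3": 0}  # two tasks never clicked
    print(tally_per_variable(0, flags))   # 2
    print(tally_any_condition(0, flags))  # 1
```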

We ran a few test cases yesterday in different sequences, with the same variables clicked/not clicked in different orders. Then we added a click on a distraction object. The pattern we are seeing is that the only time we get accurate results is when an obsolete object is incorrectly selected.
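
Re-running the same clicks in different orders can be automated once the logic is modeled outside Captivate. A minimal Python sketch: `score` is a hypothetical stand-in for the project's logic, `score_with_reset_bug` is a deliberately broken variant for comparison, and `is_order_independent` replays every ordering of one set of clicks with `itertools.permutations` and checks that they all give the same counts. Any ordering that changes the result points at an order-dependent action.

```python
from itertools import permutations
from typing import Callable, Dict, Sequence

Counts = Dict[str, int]
TASKS = ("task1", "task2", "task3")  # hypothetical task names


def score(clicks: Sequence[str]) -> Counts:
    """Hypothetical stand-in for the project's logic; order should not matter."""
    clicked = {task: 0 for task in TASKS}
    wrong = 0
    for target in clicks:
        if target in clicked:
            clicked[target] = 1
        else:
            wrong += 1  # distractor click
    missed = sum(1 for value in clicked.values() if value == 0)
    return {"correct": len(TASKS) - missed, "wrong": wrong + missed}


def score_with_reset_bug(clicks: Sequence[str]) -> Counts:
    """Deliberately order-dependent variant: a distractor click clears the task flags."""
    clicked = {task: 0 for task in TASKS}
    wrong = 0
    for target in clicks:
        if target in clicked:
            clicked[target] = 1
        else:
            wrong += 1
            clicked = {task: 0 for task in TASKS}  # hypothetical bug
    missed = sum(1 for value in clicked.values() if value == 0)
    return {"correct": len(TASKS) - missed, "wrong": wrong + missed}


def is_order_independent(logic: Callable[[Sequence[str]], Counts], clicks: Sequence[str]) -> bool:
    # Replay every ordering of the same clicks and collect the distinct results.
    results = {tuple(sorted(logic(order).items())) for order in permutations(clicks)}
    return len(results) == 1


if __name__ == "__main__":
    same_clicks = ["task1", "decoy", "task3"]
    print(is_order_independent(score, same_clicks))
    print(is_order_independent(score_with_reset_bug, same_clicks))
```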

---

I think you are going to need to explain/show your logic in more detail.

In my experience, incrementing variables can cause the issue you are describing. Any time there is a click, you need to disable every option (correct/incorrect) on the slide; otherwise, learners can click either one more than once. When you check your results, see whether the total of incorrect and correct exceeds the number of interactions you are evaluating.
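
Both suggestions (lock a slide's options after the first click, and check that correct plus incorrect does not exceed the number of interactions) can be sketched together. A minimal Python model with hypothetical names; in Captivate, the `answered` set would correspond to disabling the clicked slide's objects.

```python
from typing import List, Set, Tuple

Click = Tuple[str, bool]  # (interaction/slide ID, whether the clicked option was correct)


def count_answers(clicks: List[Click], lock_after_answer: bool) -> Tuple[int, int]:
    """Return (correct, incorrect). With lock_after_answer=True, the first click
    on an interaction disables all of its options, so later clicks are ignored."""
    correct = incorrect = 0
    answered: Set[str] = set()
    for interaction, is_correct in clicks:
        if lock_after_answer and interaction in answered:
            continue  # every option on this slide is already disabled
        if is_correct:
            correct += 1
        else:
            incorrect += 1
        answered.add(interaction)
    return correct, incorrect


def totals_look_valid(correct: int, incorrect: int, interactions: int) -> bool:
    # More counted answers than interactions means something was counted twice.
    return correct + incorrect <= interactions


if __name__ == "__main__":
    clicks = [("slide1", False), ("slide1", True), ("slide2", True)]
    for lock in (False, True):
        c, i = count_answers(clicks, lock_after_answer=lock)
        print(f"lock={lock}: correct={c}, incorrect={i}, valid={totals_look_valid(c, i, 2)}")
```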

---

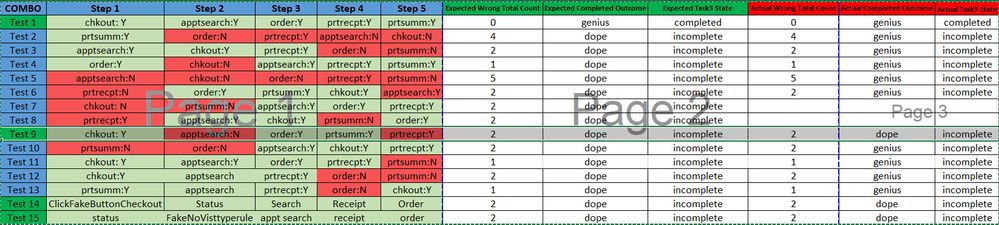

The count of correct/incorrect clicks seems to be accurate, but the complete/incomplete task result is not. Here is an example of the test cases we ran yesterday. Only the .... the combo in green were the only accurate outcomes (task 3 state).

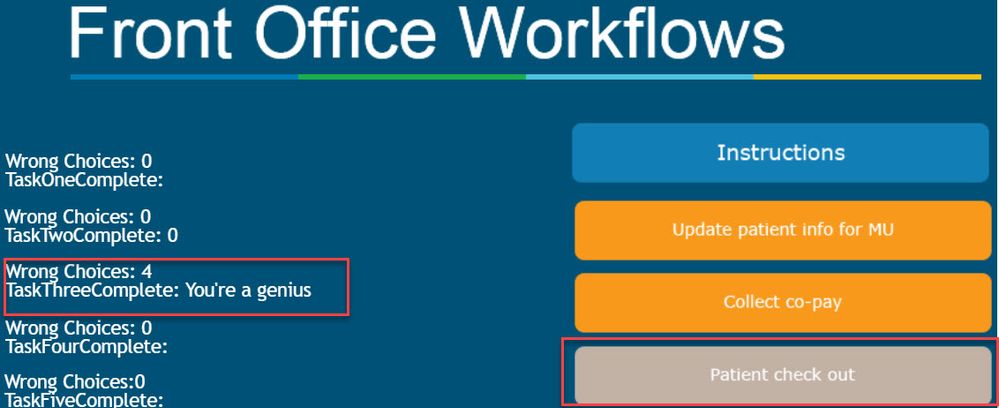

Here is what the variables look like for Test 2. The count of wrong choices is correct and the state of task 3 is correct; however, the variable for task 3 completion is incorrect. It should say 'You're a dope' (this is just a sample; we do not call end users dopes ;)).

---

The issue seems to be with counting variables that equal 0. It only produces the correct result if the fake click has incremented its variable to 1. We need to count missed clicks (variables = 0) as well, and make the outcome depend on that incremented value regardless of the false-click results.
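
One possible explanation worth ruling out, offered as a hypothesis rather than a diagnosis: if the decision that sets the completion message runs before the missed-task tally, the message only comes out right when a distractor click has already pushed the wrong count above 0, while the final count itself still ends up correct. That resembles the pattern described in this thread. A minimal Python sketch comparing the two orderings; the task names, `finish`, and the "Well done" message are hypothetical.

```python
from typing import Dict, Tuple

TASKS = ("task1", "task2", "task3")  # hypothetical task names


def finish(clicked: Dict[str, int], wrongchoice: int, tally_first: bool) -> Tuple[int, str]:
    """Run the end-of-workflow logic; returns (wrongchoice, completion message)."""

    def tally(wrong: int) -> int:
        # Add one for every task variable still at 0.
        return wrong + sum(1 for task in TASKS if clicked[task] == 0)

    def outcome(wrong: int) -> str:
        return "You're a dope" if wrong > 0 else "Well done"

    if tally_first:
        wrongchoice = tally(wrongchoice)
        message = outcome(wrongchoice)
    else:
        message = outcome(wrongchoice)  # decided before missed tasks are counted
        wrongchoice = tally(wrongchoice)
    return wrongchoice, message


if __name__ == "__main__":
    missed_task3 = {"task1": 1, "task2": 1, "task3": 0}
    print(finish(missed_task3, 0, tally_first=False))  # count right, message wrong
    print(finish(missed_task3, 0, tally_first=True))   # both right
    print(finish(missed_task3, 1, tally_first=False))  # right only thanks to the distractor
```

If the project matches the first case, making the decision that sets the message run after all of the zero checks may be enough; showing both variables in a SmartShape, as suggested earlier in the thread, would confirm the order.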