Adobe Community

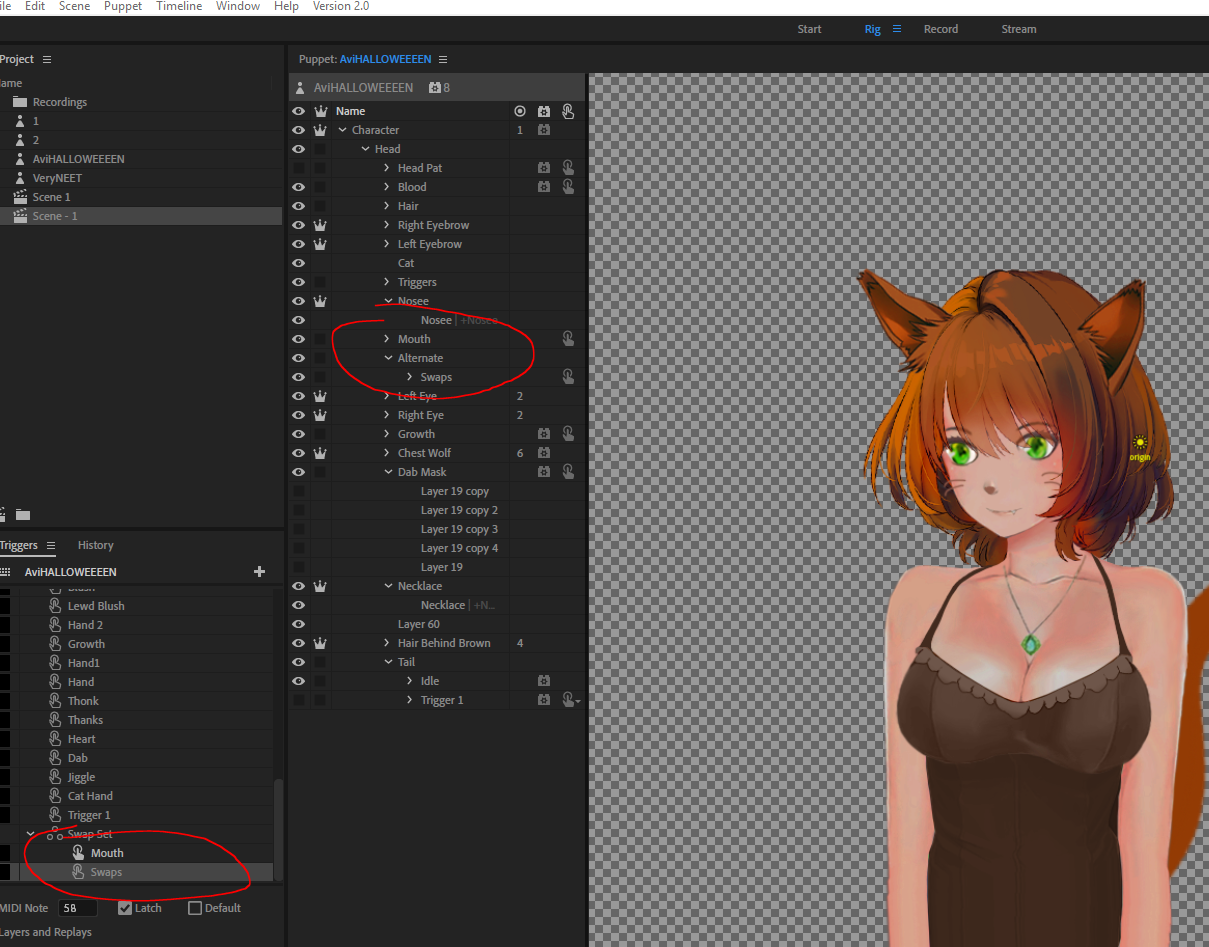

Hi, I'm having issues trying to swap between different types of mouths.

Here's what my nesting currently looks like

And here is a short GIF of what happens (the swapped-in mouth is just blank and no mouth appears):

Screen capture - bf5027d6a5b87c024219ed46dc3c2d48 - Gyazo

thanks

1 Correct answer

As I thought about this more I figured I might as well attach it. This is a pure demonstration, the upside down mouth is purely to illustrate a swap set arrangement for 2 mouth sets that seems to work.

DT

Weird, your basic setup seems right. I did something analogous with Chloe and it seems to work.

I'm not sure from the picture what is different yet. Are there any other triggers that might be hiding the mouths?

DT

What I personally do (and I tend to just repeat the same thing once I get it to work; there are probably other ways that work equally well) is create a layer “Mouth Group” (which auto-tags it as Mouth Group), with a subgroup “Mouth” containing the standard visemes. I then put the non-standard mouth positions as siblings of Mouth (under Mouth Group) and create a swap set for Mouth Group where Mouth is the default. So keyboard triggers etc. swap out Mouth and replace it with one of the other mouths (angry, grin, etc.). But that is for the case where the angry mouth has just one position (not lots of visemes). (I just like always putting all children of a swap set under one parent for simplicity and consistency.) If I want triggers for “smile” etc. (standard visemes), I create a second swap set on the Mouth layer (with Neutral as the default).
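In case it helps to see that arrangement written down, here is a rough sketch of it as a small Python model. This is not a Character Animator API (Ch is set up through the Puppet panel, not scripted this way); the `Layer` and `SwapSet` classes, the `visible` method, and the shortened viseme list are all hypothetical names made up for illustration. It only shows the idea that a swap set displays its default member until a trigger for one of its other members is active.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Layer:
    """Hypothetical stand-in for a puppet layer or group."""
    name: str
    children: list["Layer"] = field(default_factory=list)


@dataclass
class SwapSet:
    """Hypothetical model: one member shows at a time, the default when idle."""
    members: list[str]
    default: str

    def visible(self, active_trigger: Optional[str] = None) -> str:
        # A trigger only has an effect if it names a member of this swap set.
        if active_trigger in self.members:
            return active_trigger
        return self.default


# Layer tree as described: "Mouth" holds the visemes (list shortened here),
# and the single-position mouths sit next to it under "Mouth Group".
mouth_group = Layer("Mouth Group", [
    Layer("Mouth", [Layer("Neutral"), Layer("Smile"), Layer("Aa"), Layer("Ee")]),
    Layer("Angry"),
    Layer("Grin"),
])

# Swap set on Mouth Group, with the viseme group "Mouth" as the default.
group_swap = SwapSet(
    members=[child.name for child in mouth_group.children],
    default="Mouth",
)

# Optional second swap set on the Mouth layer, with Neutral as the default.
mouth_swap = SwapSet(
    members=[child.name for child in mouth_group.children[0].children],
    default="Neutral",
)

print(group_swap.visible())         # no trigger held: the viseme group shows
print(group_swap.visible("Angry"))  # trigger held: Angry replaces Mouth
print(mouth_swap.visible("Smile"))  # second swap set picks a single viseme
```

The point of keeping every swap set member under one parent is visible in the sketch: the member list is simply the parent's children, so there is no need to track layers scattered around the puppet.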

However, if you want multiple mouths each with visemes (e.g. a happy set of mouth positions and an angry set of mouth positions), you might need to add multiple Face behaviors to connect up to the different mouths. You will notice that Chloe has behaviors added on both Mouth layers. I think this is the difference.

To check, open up the Face behavior then look under “Handles”, and the last group is “Mouth”. If this has not bound to all the mouths, then I suspect you will need to add multiple behaviors to control each set of visemes.

That’s my guess anyway.

In my case, since I didn't want to draw a second set of visemes but wanted to clearly tell the difference between the two, I made her mouth shareable, dragged in another instance of it, and turned it upside down (so the tongue looks wrong, but mostly it looks like a "sad" mouth set). I didn't need a second behavior and both sets work. I think the same would have been true if I'd done the duplication and flipping in the PSD instead of via a shareable group in Ch.

DT

Dan: FUNKY!!!!

In case it's useful, Episode 22 of the "Project Wookie" series of YouTube live streams I am doing talks about how I normally do mouths and swap sets. You can skip forward to 5:20 to get to swap sets. (The full playlist is here.)

Hi Dan,

Thanks so much! I was busy with comic con this whole weekend and finally got a chance to sit down to work on this.

Within 5 minutes of seeing your example I was able to set it up for my puppet flawlessly.

I have a ton of other questions, but will make separate threads for proper documentation. Here's how the final product came out:

Screen capture - 73fe5e03fc1e514bf9a82e786837448b - Gyazo

Before, I had two triggers to activate for the resting and talking positions, but it was getting too much in the way for my live stream.

thanks again!

Awesome! Glad I could help.

I'll try to keep an eye out for other questions. Feel free to ping me directly as well, especially if a post seems to be going unnoticed.

For live streaming, I think you'll have a lot of fun with Replays in the 2.0 release if you haven't already started experimenting. Definitely a big help for making the puppeteering less manual for common gestures/poses/movements.

DT

Dan to the rescue again! 😉

Funny (sad?) thing was last night I had a character that I wanted to sit in the corner and talk over the top of a video for 20 minutes (I recorded the audio track, did the lipsync computation, all good), but it was going to take like 4 hours for AME to complete the encoding. So I live-streamed it in CH via OBS and completed it in 20 minutes. Same CH, same video length, but livestreaming allowed me to bound the time it would take to complete. The animation quality is no doubt much lower, but it's just a little character sitting in the corner of the screen. It did not need to be high quality.

It was just sad that it felt like livestreaming was quicker and easier than rendering exactly the same content via AME. I am sure I could have made it faster by reducing resolution, frame rates, etc. - but it was interesting to watch myself not bother and livestream it instead. I did not know how much time I would save with each setting, so I did not want to experiment. Then there were the CUDA, OpenGL, and software options - I had no idea what the difference was or which would be fastest. I almost want a “here is my time budget - work out the best settings within that time period”. Or have a “render preview mode” option...