Adobe Community

Lightroom Classic won't show new GPU installed

Hello,

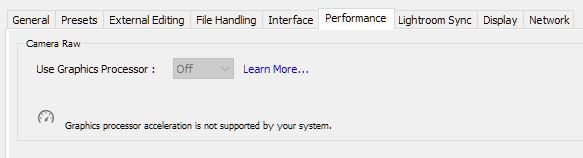

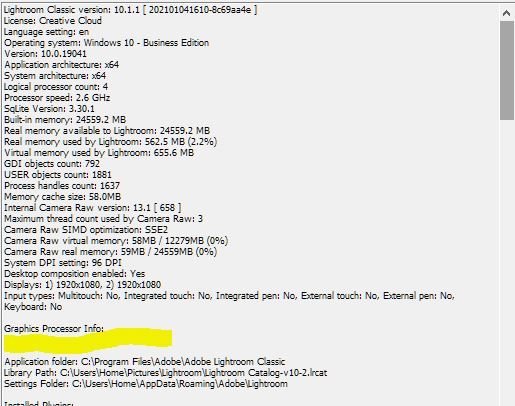

I have an older HP Z400 that I am trying to run Lightroom Classic, Bridge, Camera Raw, and Photoshop on. My original system only had a 1 GB GPU, so I upgraded to an Nvidia Quadro 4000 with 2 GB, which should just meet Adobe's minimum GPU spec. I also have 24 GB of RAM and meet all the other minimum requirements on the Adobe website. However, when I go to Edit > Preferences > Performance, the Graphics Processor option is grayed out, and it still displays "Graphics processor is not supported by system". I get the same message in the Bridge and Photoshop/Camera Raw settings too. I also did a clean install of the Nvidia drivers for my GPU, and I am running the latest version of Windows 10.

So either my new GPU still doesn't meet the minimum requirements, or I am missing something needed to get Lightroom to see the new GPU. I tried reinstalling the entire Adobe suite of software I have, with still no luck.

Any suggestions for getting Lightroom to see my GPU, or for figuring out which component of my system is the current roadblock?

The Nvidia Quadro 4000 is a ten-year-old graphics card, so it's unsurprising that LR may not support it.

Your displays are 1920 x 1080, and a GPU usually has little impact on performance on displays smaller than 4K (and sometimes performance suffers).

[Use the blue reply button under the first post to ensure replies sort properly.]

So oddly, it actually shows up in Photoshop as being used, just not in the Camera Raw component of Photoshop. I know it is an old graphics card, but it should work based on the spec, which makes me think there is a setting I missed somewhere. I'm dedicating my resources to camera equipment at the moment, so I was trying to make this old PC last a bit longer. Unfortunately, Adobe just grays out the Camera Raw graphics processor option but doesn't tell you for sure whether a hardware, driver, or other issue is preventing you from using it.

The spec for graphics cards in use with Lightroom Classic is more than just 2GB of video memory. https://helpx.adobe.com/ca/lightroom-classic/kb/lightroom-gpu-faq.html

I'm pretty sure a 10-year-old GPU will not meet those requirements.

And pay attention to what @johnrellis said ... you do not need any particular GPU with a 1920x1080 monitor, as the GPU will have virtually no effect anyway. So, whatever problem you are trying to solve with this new GPU cannot be solved by a GPU anyway.

Thanks for the help. I realize it may not do much, but I wanted to give it a try. I have a hunch: even though the Nvidia driver says it supports DirectX 12 and my PC has DirectX 12, Task Manager still lists my GPU as DirectX 11, which would make it incompatible with Lightroom. Looking further into the driver release notes, that driver adds DirectX 12 support for a wide range of models, but not for my specific Quadro 4000, so that is probably what is making it incompatible.
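For anyone who wants to double-check what the driver actually exposes rather than relying on Task Manager, here is a minimal Python sketch. It assumes you have already saved a DirectX Diagnostic Tool text report (for example with `dxdiag /t report.txt`) and that the report's display section contains `Card name:`, `DDI Version:`, and `Feature Levels:` lines; the exact layout can vary between Windows versions. The function names and the sample text are hypothetical, made up for illustration, and the "DDI version of 12 or higher" check is only a rough stand-in for Adobe's actual requirements, which are listed in the GPU FAQ linked above.

```python
import re


def parse_dxdiag_displays(report_text):
    """Collect card name, DDI version, and feature levels per display device.

    Assumes "Key: value" lines as found in a dxdiag text report; a new device
    entry starts at each "Card name:" line.
    """
    devices = []
    current = None
    for line in report_text.splitlines():
        match = re.match(r"\s*([^:]+):\s*(.*)$", line)
        if not match:
            continue
        key, value = match.group(1).strip(), match.group(2).strip()
        if key == "Card name":
            current = {"card_name": value, "ddi_version": None, "feature_levels": []}
            devices.append(current)
        elif current is not None and key == "DDI Version":
            current["ddi_version"] = value
        elif current is not None and key == "Feature Levels":
            current["feature_levels"] = [p.strip() for p in value.split(",") if p.strip()]
    return devices


def reports_dx12(device):
    """Rough check (an assumption, not Adobe's test): DDI version is 12 or higher."""
    version = device.get("ddi_version") or ""
    match = re.match(r"\d+", version)
    return bool(match) and int(match.group()) >= 12


if __name__ == "__main__":
    # Hypothetical sample text, not real output from any particular card.
    sample = (
        "Card name: Example GPU\n"
        "DDI Version: 11\n"
        "Feature Levels: 11_0,10_1,10_0\n"
    )
    for device in parse_dxdiag_displays(sample):
        print(device["card_name"], device["feature_levels"], reports_dx12(device))
```

To use it on a real report, read the saved file into a string and pass it to `parse_dxdiag_displays`. If the DDI version comes back as 11, that would be consistent with what Task Manager shows.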

> I realize it may not do much but wanted to give it a try.

It will make no noticeable improvement.

What problem are you trying to solve?

It was my understanding that certain tasks in Camera Raw, such as preview/thumbnail generation, could use the GPU. Right now it hammers my CPU to 100% when I do certain tasks in Camera Raw.

Preview creation does not use the GPU.

Previews are handled by the CPU, and since you are asking the CPU to do a lot of work (depending on how many previews you ask it to create and how large they are), the CPU going to 100% is expected.

I thought we were talking about Lightroom Classic, but you have switched to talking about Camera Raw. Why?