Adobe Community

Interactive live feed/source in PPro or After FX?

This might be a bit crazy but I've got an idea for a project I want to create. Several years ago, I saw this interactive experience at Cadbury's World, where you could step in front of a wall, and virtual bubbles would be floating down on the wall (I think it may have been a projector from behind the screen showing them). What was cool was that you could interact with the bubbles live. Your silhouette was projected onto the wall too, and if you held out your hand your silhouette could catch these virtual bubbles on the wall, or push them about.

I'd really like to try and do something similar, where I can capture a live source, add in some interactive elements, and project the live result onto a wall to give a similar effect, although I might make the elements interact differently. A couple of seconds of lag would be fine though! I don't need to stream it across a network, just show the result on an attached display device such as a monitor or projector.

I've got this nagging feeling at the back of my mind that Creative Cloud is just not built for this. But at the same time I feel that if I could just get a live source into Premiere Pro or After FX, I could make this work somehow. I'm fairly fluent in both and comfortable writing scripts in After FX.

I have a Canon 5D IV that I was hoping to use as the capture device in PPro, but from looking at some posts, it looks like this can't be used because PPro capture requires a FireWire device?

- https://community.adobe.com/t5/premiere-pro/live-video-capture/m-p/11744323

- https://community.adobe.com/t5/premiere-pro/capturing-live-video-from-canon-5d-mark-ii-onto-premiere...

I've got a Dell Precision 3640 Tower with an Nvidia Quadro P2200, which I should think is powerful enough to live-edit an FHD stream. I've got access to a projector, although I can of course test the idea just on screen.

So a few questions:

- Am I going about this completely the wrong way? Is this completely unfeasible in Adobe CC software?

- Is it possible to get a live stream into After Effects? (I feel this is better than PPro for adding in interactive elements)

- Is it at all possible to get my 5D IV working as a capture source in PPro? Or a webcam?

- If I can get a capture source working, is it possible to add any interactive elements in PPro, or is the capture feature just to record some video and not actually edit it live?

- Do I really need a 3D camera like a Kinect to be able to make this work? And should I be approaching this in completely different software?

- Had a look at OBS Studio which I use a bit but not sure that's got the capabilities that I need.

I'm probably way out of my depth with this and like I say I might be approaching this from totally the wrong angle. But please don't shoot me down, I'll really appreciate any ideas or thoughts anyone can throw into this.

EDIT:

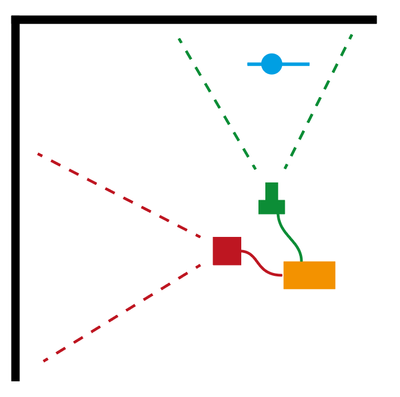

I thought I'd add an illustration for clarity. The way I see it working is that a webcam / capture source (green) provides a live stream of a person (blue) against a plain wall (black). The stream is fed to my PC (orange) which adds in the interactive elements live and outputs it to a display such as a projector (red).

Against a well-lit white wall, I could whack the contrast right up so highlights become completely white and anything else is black, which should silhouette the person reasonably well. I could create a bubble in After Effects and use sampleImage() to test if the background is white or black, and adjust the motion of the bubble accordingly. I know that's very simplified and there's a lot more code to add for the motion, speed, gravity, bubble generation etc., but I can see a way to make it work.
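To make that idea a bit more concrete, here is a minimal sketch of the logic in plain Python rather than as an After Effects expression. It assumes a frame arrives as a grid of 0-255 brightness values, and every name in it (`to_silhouette`, `Bubble`, `is_blocked`, `step`, the cut-off of 200) is made up for illustration. The per-pixel lookup in `is_blocked` only stands in for the role sampleImage() would play; it is not how After Effects itself works.

```python
from dataclasses import dataclass

# Assumed cut-off on a 0-255 brightness scale: anything darker counts as "person".
WHITE_THRESHOLD = 200


def to_silhouette(frame, threshold=WHITE_THRESHOLD):
    """Turn a grid of brightness values into a mask.

    True marks a dark pixel (the silhouette), False marks the bright wall.
    """
    return [[pixel < threshold for pixel in row] for row in frame]


@dataclass
class Bubble:
    x: float
    y: float
    vy: float = 1.0  # downward speed in pixels per frame (hypothetical default)


def is_blocked(mask, x, y):
    """Sample the mask at (x, y); positions outside the frame are never blocked."""
    row, col = int(y), int(x)
    if row < 0 or row >= len(mask) or col < 0 or col >= len(mask[0]):
        return False
    return mask[row][col]


def step(bubble, mask):
    """Advance a bubble by one frame unless the silhouette is in its way."""
    next_y = bubble.y + bubble.vy
    if is_blocked(mask, bubble.x, next_y):
        return bubble  # "caught": the bubble rests on top of the silhouette
    return Bubble(bubble.x, next_y, bubble.vy)
```

In a real setup the frame would come from the camera on every tick and the bubbles would be redrawn after each `step`. Pushing bubbles sideways, gravity and bubble generation are all left out here.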

Regards, aTomician

Nice brainstorming going on here 5D. While you can edit growing files in Premiere Pro, it is not really suited for live interaction and simultaneous output, nor is After Effects. The closest tool that supports live video streaming is Character Animator, but even that doesn't bring to mind a current workflow. Sorry.

My approach would be to use a hardware/software combo that is built for live video performance, though. My mind was blown back in the early 2000s by what VJs were doing in Tokyo. I became pretty decent at Motion Dive at the time. I would look to that as inspiration if I were in your boots. Have you looked into various VJ software? It's very intense these days.

Regards,

Kevin

Thanks! That's really helpful - kind of confirmed what I thought about CC not being suited to this. It hadn't occurred to me to look at VJ software, but I'm now installing Resolume Arena to see what I can do with that 🙂 It looks like it also has some level of integration with Adobe CC, as it asked me if I wanted to install plugins for AFX and PPro. So this should be fun!

Pity that we can't pull in a live input into PPro or AFX, so that all the tools we know can be used on a live source. But I get that these programs aren't really designed with that in mind.

Regards, aTomician

Sure, 5Diraptor. Let's stay engaged, as I'd love to help you figure CC DVA apps into your workflow if at all possible. I would really look into Character Animator as some kind of conduit to draw material from the other apps into it. Dynamic Link, while not perfect, does let these apps interact in relatively real time. I will forward your thread to some folks I know in the research arm to see if I can draw any feedback from them. No promises on what I can do, but I can at least try.

Cheers,

Kevin

@Kevin-Monahan thanks for your interest and advice. So far it looks like Resolume can do what I want, but it's going to be complex and involve some coding, which will be a learning curve. I'm keen to see what I can achieve though.

I've installed Character Animator as well, since I haven't touched it before, and I'm planning to mess about with it a bit and see what I can do. It looks possible, with live input and interactive objects functionality out of the box.

Regards, aTomician

I really like this use case, 5Diraptor! I'd love to follow up with you on some of the specific requirements ... there may be some things we could help with, and even if not, I'd like to understand where some of the gaps might be with our current tools. I work in Adobe's research division, so we're always looking for opportunities to expand the capabilities of CC. If you're interested in chatting more, feel free to reach out to me at wilmotli@adobe.com.