Adobe Community

PP stutter Playback RED Footage over Network with CUDA

Dear Adobe Community,

We have a problem at our company with the playback of RED footage. Below I describe how Premiere Pro behaves when playing back 8K R3D files, but the same applies to 5K and 6K footage.

First, the hardware and infrastructure. We have a server with a RAID controller (Adaptec 81605zq) that runs 16x 4TB Samsung Evo 860 drives in RAID 6. The server is connected to the switch through 4x 10GbE bundled over fiber. Our workstations are connected to the switch via 10GbE over copper. The server runs Windows Server 2016, the workstations Windows 10 Pro x64. All workstations show the same problem, regardless of whether the CPU is Intel or AMD (9900K or 3990X), whether the network runs over copper or fiber, or whether the network card is from Intel or Aquantia. The attached tests come from our workstation with an AMD Threadripper 3990X, 256GB RAM, an RTX 2070, and 10GbE networking. The entire network runs with 4mb jumbo frames.

Now to the problem. When we play 8K RED footage in Premiere Pro (timeline or preview), playback stutters at 1/1, 1/2, 1/4, and sometimes even 1/8 resolution. We first suspected the network, but in a copy test from the RAID to a RAM drive in the workstation we reached a sustained 1000 MB/s. The access latencies were also very good.

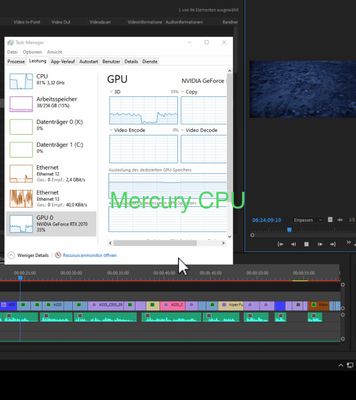
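For anyone who wants to repeat a similar check on their own share, here is a minimal sketch of a sequential read test in Python. It is not the tool used for the screenshot above. The function name and block size are illustrative assumptions, and the result uses decimal megabytes (1 MB = 10^6 bytes).

```python
import time


def read_throughput_mb_s(path, block_size=8 * 1024 * 1024):
    """Read a file sequentially and return (bytes_read, rate in MB/s).

    Assumption: decimal megabytes (1 MB = 1_000_000 bytes).
    The block size is an arbitrary illustrative default.
    """
    total = 0
    start = time.perf_counter()
    # buffering=0 disables Python's own buffer; the OS cache still applies.
    with open(path, "rb", buffering=0) as f:
        while True:
            chunk = f.read(block_size)
            if not chunk:
                break
            total += len(chunk)
    elapsed = time.perf_counter() - start
    rate = (total / 1_000_000) / elapsed if elapsed > 0 else float("inf")
    return total, rate
```

Call it with the path of a large clip on the network share. Use a file that has not been read recently, because a second read may be served from the operating system cache and report an unrealistically high rate.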

By chance, we had the Mercury Playback Engine set to CPU on one workstation. There we had no problems at up to 1/2 resolution, and scrubbing in the timeline was much smoother. In Task Manager we noticed a much higher network load: with Mercury GPU we saw 0.9 to 1.6 Gbit/s (CPUs with a higher clock rate reached higher transfer rates; a 9900K was faster than a 2990X), while with Mercury CPU it reached up to 4.5 Gbit/s.
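To put these Task Manager figures next to the copy test, here is a small conversion sketch. It assumes decimal units (1 Gbit = 10^9 bits, 1 MB = 10^6 bytes) and reads the copy-test figure of 1000 as megabytes per second, which is an assumption. The function names are illustrative.

```python
def gbit_s_to_mb_s(gbit_s):
    """Convert Gbit/s to MB/s, assuming decimal units (1 Gbit/s = 125 MB/s)."""
    return gbit_s * 1e9 / 8 / 1e6


def mb_s_to_gbit_s(mb_s):
    """Convert MB/s to Gbit/s, assuming decimal units."""
    return mb_s * 1e6 * 8 / 1e9


def share_of_copy_rate(gbit_s, copy_mb_s=1000.0):
    """Fraction of the copy-test rate that a given network load represents.

    Assumption: the copy test reached 1000 MB/s (megabytes, not megabits).
    """
    return gbit_s_to_mb_s(gbit_s) / copy_mb_s


# Network loads reported in the post, in Gbit/s.
loads = {"Mercury GPU (low)": 0.9, "Mercury GPU (high)": 1.6, "Mercury CPU": 4.5}
for label, gbit in loads.items():
    print(f"{label}: {gbit_s_to_mb_s(gbit):.1f} MB/s, "
          f"{share_of_copy_rate(gbit):.0%} of the copy-test rate")
```

Under these assumptions, Mercury GPU pulls roughly 110 to 200 MB/s and Mercury CPU about 560 MB/s, so both sit below what the plain copy test showed the link can deliver.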

Interestingly, the GPU also shows a higher load under Mercury CPU than under Mercury GPU?!

We therefore continued testing with a GTX 980 Ti, a 1080 Ti, and a 2080 Ti, always with the same result. If the footage is located on an SSD, however, the problem does not occur. We then rented an AMD Vega 54. With Mercury GPU (OpenCL) it also delivered 4.5 Gbit/s and smooth playback.

We contacted NVIDIA, and one of the engineers said that CUDA was vulnerable to PCIe underflow caused by the network card. Unfortunately, that did not get us anywhere. RED also told us that only Adobe could do something about it. So now I am turning to Adobe to ask: what could be the source of the problem?

We bought hardware, from the server through the network to the workstations, worth >100.000€, and we do not have a good working experience (with NVIDIA). We would be grateful for any help.

The problem has existed at least since Adobe Premiere CC 2018 and is still present in the latest update (17.5). It affects only RED footage; ProRes etc. loads over the network at 4.5 Gbit/s even with GPU acceleration. As a workaround we have been editing with CPU acceleration for two years now, but once Lumetri color correction is added, you can really only work with GPU acceleration...

Thanks a lot.

Hey,

I posted directly from my iPhone and used the Upload Media button to upload the screenshots from the smartphone. The screenshots are only meant as evidence and are not supposed to sit in the flow of the text. Is there a way to edit the post? Unfortunately I can't find a button for it. Thanks!

Many users have noted dramatic improvements in RED in the last release ... that would be 14.5 I think. If you are on that, and still having these troubles, please go to their UserVoice site and post the beautifully detailed information you listed above.

This site is primarily user-to-user with some product support staff oversight. That site is the direct portal to their engineering team. Every filed post is read & logged by at least one engineer, and it's one of their main paths to getting data on stubborn bugs & performance issues.

Neil

Thank you. I contacted support today, and the case has been forwarded to a more specialized person. I filed a ticket on UserVoice anyway. I had forgotten that it is not only for After Effects, where I use it really often. Thanks!

For reference, and for anyone else encountering this issue, it has been raised with our engineering team - our reference is DVAAP-864.

If anyone would like to know the status, please log a support case, or contact your account manager for further information.

I have seen SSD drives in RAID configurations perform very sporadically.