When we initiate a Nessus scan on our server, the ColdFusion Application Server consumes 80-90% of the CPU and continues to do so even after the scan is terminated. Only restarting the service brings the usage back to normal, but starting the scan repeats the high CPU usage. No errors are thrown, just high CPU.

IIS 7, Windows Server 2008 R2 SP1, ColdFusion 2016.0.04.302561

Any ideas where to start troubleshooting?

1 Correct answer

Hi,

Could you please try this step and then run the scanner once and share the details.

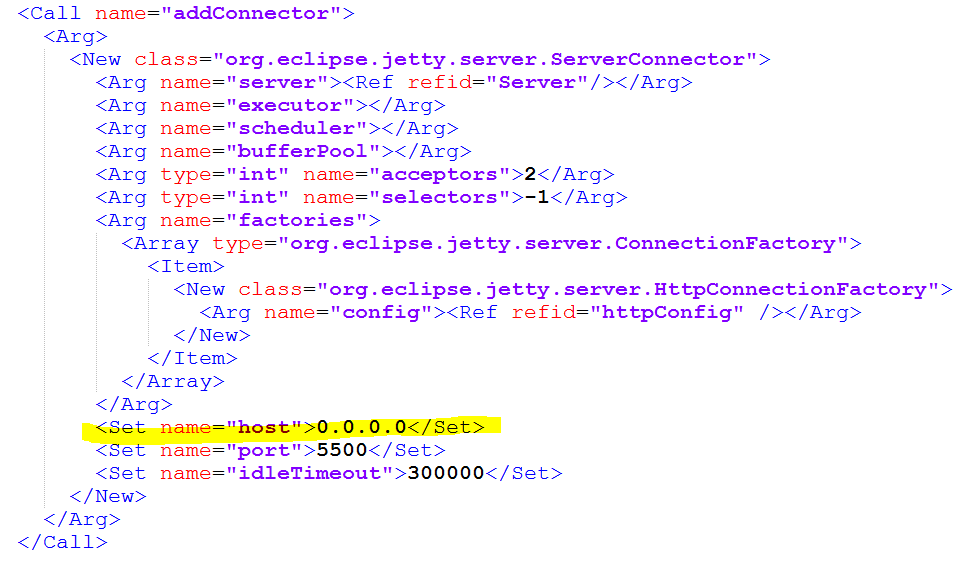

Go to \ColdFusion2016\cfusion\lib\, open "jetty.xml", and search for port "5500". The host shows "0.0.0.0"; please replace it with 127.0.0.1. Save the changes and restart the ColdFusion service.

Thanks,

Priyank Shrivastava
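
For anyone wanting to confirm the change took effect, here is a minimal Python sketch (not part of the original answer) that tests whether a TCP port accepts connections. The helper name `port_is_open` and the timeout value are illustrative assumptions; 5500 is the port named in the answer.

```python
import socket


def port_is_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout.

    Hypothetical helper for illustration; it only attempts a TCP handshake
    and sends no data.
    """
    try:
        # create_connection raises OSError (refused, timed out, unreachable)
        # when nothing is accepting connections at that address.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False
```

Called from another machine against the server's network address with port 5500, this would be expected to return `False` once the host is set to 127.0.0.1, while `port_is_open("127.0.0.1", 5500)` run on the server itself should still return `True` if the listener is up. A firewall in between would also produce `False`, so treat the result as a hint rather than proof.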

Thanks,

Will do, but will need to wait for our maintenance window to restart the service. I'll report back when we can.

Having the same issue. CPU getting maxed out and won't release after scans unless services are restarted. Was this ever solved??

We are also experiencing the same issue with CF 2016 after a Nessus scan, but our CPU typically rises from 3-5% to 40% or more. Each CF instance rises to 40%, so with 2 instances on the same box we see 80% CPU that will not fall until we restart CF.

Any help appreciated.

-Gabe

I'm not as surprised by a spike in processing by Nessus scans, or any other security scans. And I would not think it's a "ColdFusion problem" when I see it happening. Let me explain.

First of all, I find that such scans (just like a rash of visits from a search spider) often come with high intensity (many requests per second). Your server may have other traffic at the same time, so this added traffic is itself a burden. While many servers and CF instances are configured well to handle such a bump in traffic alone, others are not and could suffer for any number of reasons. (More in a moment.)

Second, such security scans like Nessus are generally trying to detect vulnerabilities, right? And they may be sending in requests that trigger errors. Why is that an issue? Well, if you have error handling in place to detect their naughtiness, you may be doing some pretty heavy work on each failure.

I've seen people who have their error handlers send a cfmail on each error, with a dump of all variable scopes. That's ok if it happens once an hour, or once a minute. But a few times a second? That alone could be your bottleneck (increasing CPU time directly, or increasing memory use, which causes more GCs, which in turn increases CPU time). Yep, all due to a lowly CFDUMP.
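
One common way to blunt that cost is to throttle the notification itself. The following is a minimal Python sketch of the idea, not CFML and not anything taken from this thread: the class name `ThrottledNotifier`, the `send` callback, and the 60-second interval are all hypothetical choices for illustration.

```python
import time
from typing import Callable, Optional


class ThrottledNotifier:
    """Send at most one notification per interval and count the rest.

    Hypothetical sketch: `send` stands in for whatever expensive action the
    error handler performs (for example, building and mailing a dump).
    """

    def __init__(
        self,
        send: Callable[[str], None],
        min_interval_seconds: float = 60.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._send = send
        self._min_interval = min_interval_seconds
        self._clock = clock
        self._last_sent: Optional[float] = None
        self.suppressed = 0

    def notify(self, message: str) -> bool:
        """Return True if the message was sent, False if it was suppressed."""
        now = self._clock()
        too_soon = (
            self._last_sent is not None
            and now - self._last_sent < self._min_interval
        )
        if too_soon:
            # Skip the expensive work entirely; just remember that it happened.
            self.suppressed += 1
            return False
        if self.suppressed:
            message = f"{message} (+{self.suppressed} similar errors suppressed)"
        self._send(message)
        self._last_sent = now
        self.suppressed = 0
        return True
```

With something like this in place, a burst of scanner-triggered errors would cost one notification per interval plus a counter increment for each of the others, instead of one full dump and mail per error.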

Third, that rash of visits (from a scanner or a spider) will generally create a new session for each page that they hit. Again, that alone may be no big deal. But let's say they visit 1,000 pages in a minute. That's an increase of 1,000 sessions in that minute. Will that be a big deal?

Well, it depends on a) what your code puts in the session, and b) how long sessions are set to last. And this is not necessarily about the page being called, but the Application.cfm or Application.cfc being called on BEHALF of that page requested. Do you have an onSessionStart (or some code that tests if no session yet exists) where you perhaps load up some CFCs, or queries, or arrays, on behalf of your "user"? Again, that's fine for real users, but automated requests will never use that info beyond that first page, because their next request will create a new session, and on and on.

This is a frequent cause for LOTS of heap use, and again the longer sessions last, the more heap they use (and then eventually the more GCs that happen, or you may well run out of memory.)
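
To put rough numbers on that, here is a back-of-envelope Python sketch. The 1,000 requests per minute comes from the example above; the 20-minute session timeout and the 50 KB stored per session are assumptions chosen only for illustration, so substitute your own values.

```python
def steady_state_sessions(new_sessions_per_minute: int, timeout_minutes: int) -> int:
    """Sessions alive at once if every request creates a session that is
    never reused and only disappears when the timeout expires."""
    return new_sessions_per_minute * timeout_minutes


def session_heap_mb(sessions: int, kb_per_session: float) -> float:
    """Approximate heap held by session data, in MB (1 MB = 1024 KB)."""
    return sessions * kb_per_session / 1024


# 1,000 new sessions per minute (from the example above),
# 20-minute timeout and 50 KB per session (both assumed).
sessions = steady_state_sessions(1000, 20)
heap_mb = session_heap_mb(sessions, 50)
print(sessions, heap_mb)
```

Under those assumptions the scan would leave about 20,000 sessions alive at once, holding a bit under 1 GB of heap, which is why both the session timeout and what gets loaded at session start matter so much for automated traffic.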

I covered this subject of the impact of spiders and other automated tools in a session at recent conferences:

http://carehart.org/presentations/#spiders

Bottom line, those experiencing this issue would do well to have any kind of monitoring tool at all on their CF server, so you could know what's happening (instead of just wondering "what's wrong"). If you have CF Enterprise, you have the CF Enterprise Server Monitor (which can show you many things without negative impact, as long as you do not turn on the "Start Memory Tracking" feature, as that may itself kill your instance). If you're on CF Standard (or Enterprise, or Dev or trial, or Lucee or BlueDragon), you can use FusionReactor or SeeFusion.

As for what you may find, that's too much to anticipate in this reply, but I hope the info above is enough to get some of you started. You need not feel as lost as you do, and despite Priyank's note above, my experience is that the answer is not something within CF itself, nor some inherent "problem of CF and Nessus scans", but rather just another manifestation of quite common problems that can hit any CF instance (and indeed any kind of app server, when the pages being called are dynamic rather than static), perhaps exacerbated in this case by error handling and session handling steps that may be more common in CF apps than others.

Finally, if any of you are still stuck trying to solve the problem, I suspect I could solve it in less than an hour together in an online session (via screensharing, not remote desktop, so no need for you to open any firewall holes to let me in.) For more info, see http://www.carehart.org/consulting. Sorry for the sales pitch, but I'm just saying you don't need to feel alone, if you need more help interpreting what you find above without a lot of time and back and forth here (or indeed just want someone to "find the issue and fix it").

/Charlie (troubleshooter, carehart.org)

So making the change from "0.0.0.0" to 127.0.0.1 in the jetty.xml file worked! Nessus scans do not tie up the CPU when you make this change. I tested several times, both with the change and without, to make sure. But what are the implications of making this change? Can this break anything?

thanks!

Gabe

Hi, Gabriel. I will say I'm glad to hear that you solved it, but I wish you had said you had not yet tried it. Since your note was from yesterday and Priyank's mention of that config file was from June, I assumed you were implying that it had NOT worked for you, which is why I shared the rest. 🙂

But I'm with you: I'd love to hear from Priyank what the implication of this change is, especially why the change had such a dramatic impact, and also, as you ask, whether changing it has any other implications.

I will confirm (to save someone else the bother of pointing it out) that the jetty docs do clarify that this host value controls whether the jetty web server listens on all network interfaces or not (it does, if set to 0.0.0.0, or if the host is not set at all). For example, see "What to Configure in Jetty". But it doesn't really help me see why that would lead to the high CPU in CF.
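
As a small illustration of what that host value means at the socket level (independent of jetty or CF), here is a Python sketch that binds a TCP socket to each address and reports what the operating system says it is bound to. The helper name `bound_address` is hypothetical, and port 0 simply asks the OS for any free port.

```python
import socket


def bound_address(host: str) -> str:
    """Bind a TCP socket to `host` on an OS-chosen port and return the
    local address the socket ends up bound to."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as s:
        s.bind((host, 0))  # port 0: let the OS pick a free port
        return s.getsockname()[0]


# "0.0.0.0" is the IPv4 wildcard: a listener bound to it accepts connections
# arriving on any of the machine's interfaces.
print(bound_address("0.0.0.0"))

# "127.0.0.1" is the loopback address: only clients on the same machine can
# reach a listener bound to it.
print(bound_address("127.0.0.1"))
```

That is consistent with the change helping against a scanner running on another machine, since a remote scanner cannot connect to a loopback-only listener, though it does not by itself explain the CPU behavior.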

And for those perhaps not aware, this jetty (and jetty.xml) we're referring to here is NOT the same jetty which underlies the "CF Add-on service", which supports the Solr and CFHTMLtoPDF capabilities. That's a jetty that runs as its own service (and has its own jetty.xml, in C:\ColdFusion2016\cfusion\jetty\etc\jetty.xml, for instance).

Instead, this jetty referred to here is one that underlies the optional "server monitor" web service, which can be enabled if one (using CF Enterprise) turns it on with the Server Monitor>Monitoring Settings page. It creates an alternative web server for accessing the CF Server Monitor.

Sadly, I have seen this jetty and its port and processing cause other conflicts even for CF Standard (which does not have the Server monitor, and so can't use this "monitoring server"). And perhaps the conflicts I've seen could have been solved by this matter of the host being set to 0.0.0.0. I just didn't connect that dot at the time.

But I hope someone from Adobe will chime in with a bit more. (And hope all this discussion may help other readers who find this in the future.)

/Charlie (troubleshooter, carehart.org)

Thank you, Charlie, for your suggestions. When I first read about changing the jetty file, I thought it was only meant to help diagnose the problem, and I had no hope that it would fix it, so I didn't try. But out of desperation I tried it and was surprised.

I just had a customer experience the same issue. All of their dev and test CF servers spiked at the same time during the early morning hours. The CPU stayed at 30-40%. It seems the scan does a port scan and then jams each host and port combination with hundreds of bad HTTP requests.

It appears some do make it in and mess up the code listening on the socket.

My question is whether all connectors should default to 127.0.0.1. You also have 1 or 2 connectors in server.xml. It would save customers who do these scans an extra step for every server.

Mike Collins