Accented characters exported in a .csv file

I wrote a script that merges the data from several forms into a .csv file attached to a document.

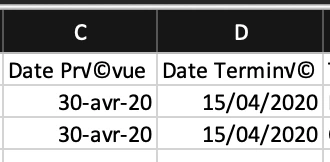

Everything works fine, except for the accented characters, which don't appear correctly in the .csv file.

To export the data, I use util.streamFromString with the utf-8 setting.
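
To make the setup concrete, here is a minimal sketch of this kind of export. It is not the actual script: the helper names `csvField`, `buildCsv`, and `attachCsv` and the attachment name `merged.csv` are hypothetical, and attaching the file with `createDataObject` / `setDataObjectContents` is an assumption about how the stream ends up in the document.

```javascript
// Quote a field only when it contains a quote, a comma, or a line break.
function csvField(value) {
  var s = String(value);
  return /[",\r\n]/.test(s) ? '"' + s.replace(/"/g, '""') + '"' : s;
}

// Join rows (arrays of values) into CSV text with CRLF line endings.
function buildCsv(rows) {
  return rows.map(function (row) {
    return row.map(csvField).join(",");
  }).join("\r\n") + "\r\n";
}

// Acrobat-only part (assumed): encode the text as UTF-8 and store it
// as a file attachment of the given document.
function attachCsv(doc, name, csvText) {
  var stream = util.streamFromString(csvText, "utf-8");
  doc.createDataObject(name, "");          // create an empty attachment
  doc.setDataObjectContents(name, stream); // replace its contents with the stream
}

// Inside Acrobat, something like:
// attachCsv(this, "merged.csv", buildCsv([["nom", "ville"], ["Élodie", "Orléans"]]));
```

Some spreadsheet programs only detect UTF-8 when the file starts with a byte order mark, so prepending `"\uFEFF"` to the text may be worth trying. Whether that is the cause here is an assumption.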

I tried all the other settings, but none of them gives the correct result.

Is there a way to export the accented characters correctly?

FYI, merging data with the Acrobat tool works fine.

Thanks for your answer.