Adobe Community


Feature Focus: Body Tracker


Live-perform character animation using your body. Powered by Adobe Sensei, Body Tracker automatically detects human body movement using a webcam and applies it to your character in real time to create animation. For example, you can track your arms, torso, and legs automatically.

Get started now with an example puppet

- Download the Hopscotch_Body Tracking puppet.

- Open Character Animator (Beta), choose File > Import, and then select the downloaded .puppet file.

- Click the Add to New Scene button at the bottom of the Project panel to create a scene for the puppet.

- In the Camera & Microphone panel, make sure the Camera Input and Body Tracker Input buttons are both turned on. If either is off (gray), click it to enable that input.

- Now try moving your body, waving your arms, and if you’re far enough from the camera to see your lower body, raising your feet. Body tracking is that simple!

Additional example puppets are available for you to try out.

Control body tracking

Puppets that respond to body tracking use the Body behavior. When a puppet track is selected in the Timeline panel, the Properties panel shows the behaviors applied to the puppet, including Body. This behavior lets you customize how the Body Tracker works. For example:

- Set When Tracking Stops to control how the tracked parts of your puppet’s body move when your body can’t be tracked, such as when your legs or arms move out of the camera’s view. Hold in Place keeps the tracked handles where they were just before tracking was lost. Return to Rest and Snap to Rest instead move the handles back to their default positions in the artwork: Return to Rest moves them gradually, at a rate set by Return Speed, while Snap to Rest moves them back immediately.

- Select the specific Tracked Handles on your puppet that you want to be controlled by your body movement. By default, just the wrists are tracked.

To capture your body tracking performance for export to a movie file or for use in an After Effects or Premiere Pro project, record the performance (see the Help documentation on recording in Character Animator).

Set up your own puppet for body tracking

The Body behavior lets you control your puppet’s arms, legs, and torso by moving your body in front of your webcam. When you use the Body behavior, also apply the Face behavior to control the character’s position and track your facial expressions. You can also use the Limb IK behavior to prevent limb stretching, inconsistent arm bending, and foot sliding.

Setup

After importing your artwork into Character Animator, follow these steps to rig the puppet so that the Body Tracker can control it:

- Open the puppet in Rig mode.

- In the Properties panel’s Behaviors section, click “+”, and then add the Body behavior.

- Create tagged handles for the different parts of the body’s artwork so that the Body behavior can control their movements:

- Arms: Move the arm’s origin handle to the shoulder area, where the arm should rotate from, but do not tag it as “Shoulder” on the arm itself. Tag your shoulders and hips one level higher in the puppet hierarchy (commonly the Body folder or the view folder, e.g., Frontal) to keep your limbs attached during body tracking.

Next, add handles at the elbow and wrist, and apply the Left/Right Elbow and Left/Right Wrist tags to them.

- Legs: As with the arms, move the leg’s origin handle to the hip area, but do not tag it as “Hip” on the leg itself. Add handles tagged Left Knee, Left Heel, and Left Toe to the left leg and foot, and handles tagged Right Knee, Right Heel, and Right Toe to the right leg and foot.

- Shoulders & Hips: The Shoulder and Hip handles should be on the body, not on the limbs. Select the parent group containing your limbs and add handles at the same shoulder and hip positions as the arm and leg origin handles. Green dots from the limbs mark where to place them; alternatively, copy and paste the handles directly from the limbs to position them exactly. Either approach works. Tag these handles as Right Shoulder, Left Shoulder, Right Hip, and Left Hip.
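The tag layout above can be summarized as a checklist. The following is a minimal Python sketch, not a Character Animator feature or API: rigs are built in Rig mode, and the `rig` dictionary, the group names (`Body`, `Left Arm`, and so on), and the `check_body_rig` function are hypothetical. Only the tag names come from the steps above.

```python
# Hypothetical checklist for the Body behavior tag layout described above.
# Not a Character Animator API: the rig is modeled as a plain dict that maps
# a group name to the set of handle tags applied directly in that group.

# Tags that belong on each limb group (group names are illustrative).
LIMB_TAGS = {
    "Left Arm": {"Left Elbow", "Left Wrist"},
    "Right Arm": {"Right Elbow", "Right Wrist"},
    "Left Leg": {"Left Knee", "Left Heel", "Left Toe"},
    "Right Leg": {"Right Knee", "Right Heel", "Right Toe"},
}

# Shoulder and hip tags go one level up, on the parent group
# (e.g., the Body folder or a view folder such as Frontal).
PARENT_TAGS = {"Left Shoulder", "Right Shoulder", "Left Hip", "Right Hip"}


def check_body_rig(rig: dict, parent: str) -> list:
    """Return a list of problems found in `rig`; an empty list means none."""
    problems = []
    for limb, required in LIMB_TAGS.items():
        tags = rig.get(limb, set())
        for tag in sorted(required - tags):
            problems.append(f"{limb}: missing '{tag}' handle")
        for tag in sorted(tags & PARENT_TAGS):
            problems.append(f"{limb}: '{tag}' belongs on {parent}, not on the limb")
    for tag in sorted(PARENT_TAGS - rig.get(parent, set())):
        problems.append(f"{parent}: missing '{tag}' handle")
    return problems


if __name__ == "__main__":
    rig = {
        "Body": {"Left Shoulder", "Right Shoulder", "Left Hip", "Right Hip"},
        "Left Arm": {"Left Elbow", "Left Wrist"},
        "Right Arm": {"Right Elbow", "Right Wrist", "Right Shoulder"},
        "Left Leg": {"Left Knee", "Left Heel", "Left Toe"},
        "Right Leg": {"Right Knee", "Right Heel"},
    }
    for problem in check_body_rig(rig, "Body"):
        print(problem)
```

For the sample rig, the sketch reports that the Right Shoulder tag sits on the arm instead of the Body group and that the right foot is missing its Right Toe handle.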

Note: Having both the Body and Walk behaviors on the same puppet can cause issues because both try to control the same handles. If you do have both behaviors applied, consider turning off the Body behavior when animating walk cycles, and turning off Walk when doing general body animation. The Body behavior should work fine in side and 3/4 views.

Controls

This behavior has the following parameters:

- When Tracking Stops controls how the puppet moves when the camera cannot track your body, such as when you move out of view. Hold in Place keeps the untracked body parts where they were last tracked. Return to Rest gradually moves the untracked body parts back to their original locations; use it with the Return Speed parameter. Snap to Rest moves them immediately to their original locations.

- Return Speed controls how quickly untracked body parts return to their resting locations. This setting is available when the When Tracking Stops parameter is set to Return to Rest.

- Tracked Handles controls which of the tagged handles should be controlled by the Body Tracker. There are separate controls for the neck, the joints on the left and right arms, and the joints on the left and right legs.
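To make the three When Tracking Stops modes concrete, here is a minimal Python sketch of how a single handle position might be updated each frame. It is an illustrative assumption, not Adobe's implementation: the function names, the mode strings, and the interpretation of Return Speed as scene units per second are all hypothetical.

```python
import math


def step_toward(pos, target, max_dist):
    """Move `pos` toward `target` by at most `max_dist`, without overshooting."""
    dx, dy = target[0] - pos[0], target[1] - pos[1]
    dist = math.hypot(dx, dy)
    if dist <= max_dist:
        return target
    scale = max_dist / dist
    return (pos[0] + dx * scale, pos[1] + dy * scale)


def update_handle(pos, rest, tracked, mode, return_speed=100.0, dt=1 / 24):
    """Return the handle's next (x, y) position.

    tracked: position from the tracker, or None when tracking is lost.
    mode: "hold", "return", or "snap" (Hold in Place, Return to Rest, Snap to Rest).
    return_speed: hypothetical speed toward rest, in scene units per second.
    dt: frame duration in seconds.
    """
    if tracked is not None:
        return tracked  # body part is visible: follow the tracker
    if mode == "hold":
        return pos  # stay where the part was last tracked
    if mode == "snap":
        return rest  # jump straight back to the artwork's rest position
    if mode == "return":
        return step_toward(pos, rest, return_speed * dt)  # glide back gradually
    raise ValueError(f"unknown mode: {mode!r}")


if __name__ == "__main__":
    pos, rest = (100.0, 0.0), (0.0, 0.0)
    for frame in range(5):
        pos = update_handle(pos, rest, None, "return", return_speed=48.0, dt=0.5)
        # x moves up to 24 units closer to rest each frame and stops at 0
        print(frame, round(pos[0], 2))
```

In this model, a higher Return Speed simply covers more distance per frame, and Snap to Rest behaves like an infinitely fast Return to Rest.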

Troubleshooting

If you’re getting unexpected tracking results, try recalibrating the Body Tracker by doing any of the following:

- Click the Set Rest Pose button in the Camera & Microphone panel. When Body Tracker Input is on, Set Rest Pose shows a five-second countdown so that you can move back from the camera to get your entire body into view.

- Click the Refresh Scene button in the Scene panel while Body Tracker Input is on.

- Turn on Camera Input while Body Tracker Input is also on.

- Turn on Body Tracker Input.

The character’s overall position comes from face tracking, so if you notice floating feet, choppy movement, or too little horizontal movement, check the following:

- lighting on your face

- starting head position when you click Set Rest Pose

- Face behavior’s Head Position Strength setting

As with face tracking, dim lighting can reduce tracking quality and introduce jittery movement. Make sure the room is adequately lit when tracking your body.

If the arms are not moving, make sure both of your shoulders are being tracked in the camera.

Known issues and limitations

Body Tracker is still in development.

In this first public Beta version (v4.3.0.38), please note the following:

- Foot position might slide or jump around unexpectedly.

- The Walk and Body behaviors do not cooperate well since they are both trying to operate on the same handles simultaneously.

- When your body moves outside of the camera’s view, the bone structure overlay stays visible in the Camera & Microphone panel.

What we want to know

We want to hear about your experience with Body Tracking:

- What are your overall impressions?

- Are you able to control your puppet’s movement the way you want?

- Have you found interesting ways to use the Body Tracker?

- Which Body behavior parameters and handles did you find useful or not useful?

- How can we improve the Body Tracker?

Also, we’d love to see what you create with Body Tracker. Share your animations on social media with the #CharacterAnimator hashtag.

Thank you! We’re looking forward to your feedback.

(Use this Beta forum thread to discuss the Body Tracker and share your feedback with the Character Animator team and other Beta users. If you encounter a bug, let us know by posting a reply here or choosing “Report a bug” from the “Provide feedback” icon in the top-right corner of the app.)

---

Thanks for the feedback! This is something we are actively looking into. We have an internal parameter that can control latency vs. smoothness, but it's still a work in progress. We're hopeful we can give users more control over this in the coming months, so please stay tuned!

---

This is awesome!

Had a little trouble getting the body tracking set up. Then I watched your video. It's a behavior! OF COURSE! I added the behavior and it is working now!

I really like it!

Where/how do we deliver feedback?!

🙂

---

Thanks! You can share your feedback here!

---

Still only really successful using Body Tracker with the frog example puppet. After putting some of your above suggestions to use, I was able to get "Maddy" almost there and "Dr. Applesmith" too, all but some funky leg gyrations and slippage now and then, so we're getting closer to some level of competence.

---

Hi, I'm enjoying the new beta. One issue I'm having is that when I move my character's arms (using my own arms with body tracking), the hands take a little while to orient themselves to the same angle as the lower arm.

Is there a setting somewhere that I can adjust this to speed it up a bit?

At the moment I bring the arm up to do a wave and that matches the speed of my arm on the webcam but then the hand is bent at 90 degrees and sloooowly rotates to be the correct way up to wave.

Probably something obvious, but it seems to be smoothing that movement and I can't find out where.

Thanks

---

Latency is a known issue in the current build. We are working on ways to fix this. For example, a potential "smoothness" control would let you set how responsive the camera is, with 0% being low latency but potentially more jittery, and 100% being smoother but with more latency.

If it's possible to send a video of what's happening, even if it's just a cameraphone shot of your screen, that would help us a lot. You can post here or DM it to me.
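The latency vs. smoothness tradeoff described in the reply above is the classic behavior of a low-pass filter. Adobe hasn't described how its control works; as a generic illustration only, here is a Python sketch using an exponential moving average. The `smooth` and `frames_to_settle` functions and the mapping from a 0 to 1 `smoothness` value to the filter weight are assumptions.

```python
def smooth(samples, smoothness):
    """Exponential moving average over tracked positions.

    smoothness = 0.0 passes samples through unchanged (lowest latency, most jitter);
    smoothness = 1.0 filters heavily (least jitter, most latency).
    """
    if not 0.0 <= smoothness <= 1.0:
        raise ValueError("smoothness must be between 0.0 and 1.0")
    # Weight given to the newest sample; it never reaches 0, so output keeps moving.
    alpha = 1.0 - 0.95 * smoothness
    out = []
    y = samples[0] if samples else 0.0
    for x in samples:
        y = alpha * x + (1.0 - alpha) * y
        out.append(y)
    return out


def frames_to_settle(output, target, tol=0.1):
    """Index of the first output within `tol` (as a fraction) of `target`, else None."""
    for i, y in enumerate(output):
        if abs(target - y) <= tol * abs(target):
            return i
    return None


if __name__ == "__main__":
    # A wrist that is still for 5 frames, then jumps to a new position (a step).
    step = [0.0] * 5 + [1.0] * 60
    for s in (0.0, 0.5, 1.0):
        settled = frames_to_settle(smooth(step, s), 1.0)
        print(f"smoothness {s:.0%}: settles at frame {settled}")
```

The same filter that makes the output lag behind a sudden movement is what averages away frame-to-frame jitter, which is why a single slider can trade one for the other.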

---

Thanks for the reply! 🙂

I've uploaded a terrible clip on my phone to youtube:

https://www.youtube.com/watch?v=4oKvgOHU0ck

Ignore the character's right hand behind his back, I had to hold the phone at the same time!

The issue I'm referring to is when I raise my hand, the camera tracks the character's left arm pretty well (and even better when I'm not trying to film at the same time!) but the hand (on its own layer) seems very slow to catch up.

I understand that hands/fingers aren't being captured yet, so I assume the hand layer is set to reorient itself to the same angle as the lower arm, but it seems very slow to get there! It might just be a slider or setting that I've set wrong?

Hopefully the video shows what I mean.

So for something like a wave, the hand ends up out of sync with the arm.

Thanks 🙂

---

Thank you!

I usually work in 3D (in Blender specifically), but I haven't found a webcam and lip-syncing control system for controlling characters natively in Blender that is anywhere near as straightforward and quick to implement as Character Animator.

So I've started rendering each of the character's body parts out from Blender, building the puppet in Photoshop, and then bringing that into Character Animator.

Of course, the puppet doesn't react to lighting and camera work the way it would in Blender, but for characters who aren't walking around and are just talking to camera, it's such a good way to do things!

---

Thanks so much, this is super helpful to see! We've seen this come up with a few other body-tracked puppets. I'm guessing this also happens if you just drag the hand? To fix it, you may want to try a) extending the forearm stick all the way to the fingertips to give it more rigidity, and possibly also b) moving the wrist/draggable handle closer to the ends of the fingers. In a lot of my puppets I end up putting the wrist/draggable tags inside the palm of the hand.

Let me know if that works or not! This is really helpful to me in thinking about how we teach people to rig their puppets when this does ship in the full release.

---

Thank you! (and thanks for all the youtube videos!)

That is a super helpful suggestion regarding the forearm stick / wrist handle. I will give it a try and report back!

Interestingly, it isn't as slow to catch up when I drag the hand with the mouse, but I think you'd already touched on why: I always put the draggable handle in the palm or at the start of the fingers, because I then click on the character's hand and control it with the mouse. That works pretty well most of the time, including for waves, since I'm using the palm as the control point.

On this body tracking enabled version of the puppet I had the draggable on the palm but I had put the wrist handle on the actual wrist position of the character (because I thought it needed to go pretty much exactly where the name suggests!)

Now I'm pondering whether some of the other handles don't belong exactly on their anatomical positions? (But then again, the elbows and shoulders seem fine where they are!) 🙂

---

Do you think the speed of the computer and/or the size of your puppet layers have anything to do with the latency...?

Scott

---

My puppet is pretty big, I think (the PSD is 2500 px square because I wanted to do close-ups with the same puppet), but in this case the problem was down to where the joints were positioned, as the arm waves pretty well now that I've moved them as recommended 🙂

---

OK, so that worked really well! Quickest fix ever!

Thanks so much!

---

Hi,

I am hoping you will have Body Tracker see hand and finger shapes as well.

I need to make dozens of musical hand signs at different arm heights for nursery rhymes, such as OK, thumbs up, together in prayer, spider crawling, rain falling, etc.

Is there a way to do that now without manually rigging?

---

Thanks for the feedback! This will not be in v1, but it's something we're thinking more about for the future. For now, you will still need to rig the different hand positions and trigger them. Or play around with third-party tools: someone on our team actually used Nintendo Switch controllers + JoyToKey on PC to make remote trigger buttons!

Copy link to clipboard

Copied

Can we film the action beforehand, bring the footage into Character Animator, and then body-track the footage, rather than tracking live movement? If not, that would be a valuable feature for our organization.

---

Ch doesn’t support that directly, but as a workaround you can use a tool like https://manycam.com/ to feed a prerecorded video file into a virtual webcam that Ch thinks is live. I think the free version can do this; at least it used to.

---

Dear body tracker beta testers,

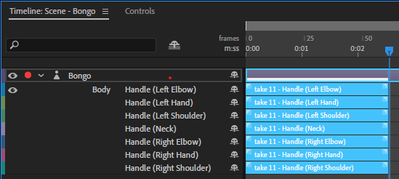

In the new build that went out today (v4.4.0.31, 2021-06-14), the Body Tracker records a take per handle. Any opinions on this change are deeply appreciated. In your Creative Cloud Desktop app, go to Help > Check for Updates to make sure you get the latest build. Thank you!

---

Hello. I have two questions, each of which might be stupid (and I'm not even sure I've posted this in the correct place), but a) I'm terrible with these forum thingies, and b) I don't usually try beta versions of stuff.

I just discovered the Body Tracking Beta Feature when searching YT videos for CH lip syncing tutorials. It looks amazing and I'm going to try it.

My questions are:

1. As far as anyone at Adobe currently knows, is the CH Body Tracking Beta Feature going to continue to be available until its full release? In other words: There are no plans to make it unavailable before the full release - even if someone discovers an awful bug or something, is that correct? I want to try it for a big project that might take months, and I just want to make sure the odds are that it won't suddenly become unavailable as I'm using it for this project. From what I can tell, this is the case; I just want to confirm.

2. The preview video at https://www.youtube.com/watch?v=pCKMpTE4Cu8 says you can record your full-body animations. Does this mean that you can import these recordings into other apps and use / export them at full capacity - with no watermarks or anything? I think it does; I just want to confirm. Again, I just want to make sure the odds are that I can use the beta version for a big project without any unforeseen hiccups.

Thanks. If someone from Adobe answers, I promise I'll leave feedback on the feature, haha. 🙂

---

1. Yes. Based on the feedback we're getting, body tracking will eventually make it into the shipping version. There may be changes in how it's implemented, but the feature as a whole will definitely be making it in. We have no announcement about the timeframe yet.

2. The body tracking is for CH, so it's used specifically for moving your animated characters around. There is no easy way in v1 to export the raw tracking data from CH to other apps or file types. I have seen some imaginative beta testers make puppets that are essentially stick figures with tracking dots that they use elsewhere, or simply enlarge the webcam panel and screen-capture it for motion tracking in After Effects or elsewhere.

---

Thanks for the reply! My questions probably weren't clear because I don't know all the jargon, but what I meant is...

1. The beta version of the Body Tracker feature will not for some reason suddenly become unavailable for testing, is this correct?

2. Yes, but it says at the top of the page that you can share what you create on social media. So I assume this means you can export the body tracker animations without watermarks or anything and then carry the exports into AE or other software.

No need to reply again unless you're on here anyway answering other questions. I'll try it and find out. Thanks!

---

1. There are no plans to remove it from the beta; it will definitely be there.

2. Yep, you can use CH without any watermarks or credit or anything.

Have fun!

---

Thanks!