- Substance 3D Designer

- Re: Reading details/parameters from input nodes

I have made a few utility graphs that I use as nodes in some of my material graphs, and I am curious whether there is a way to read details about a graph's inputs from within that graph. Specifically, is it possible to read the size of an image connected to an input of the node? For example, I have a utility node that accepts a few single-channel image inputs that could be of different sizes, and I am trying to write a function that performs some calculations based on the two input images' sizes.

I know I can do this by exposing parameters and just setting those parameters on the node when it is used, but this is rather error prone. If the size of the inputs changes the graph author needs to remember to go update these node parameters or else bad stuff happens.

So, if I had two inputs in my graph, say image1 and image2, I would like to be able to do something in a function like GetFloat2 on image1.$size.

Is this possible in a function? If not, is it possible in the Python scripting API?

Hello,

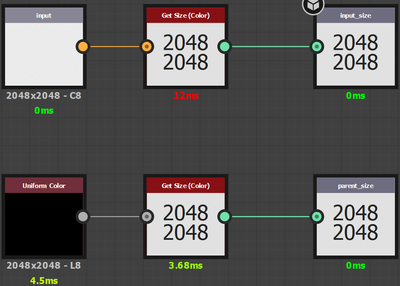

You may connect the Input nodes to Get Size nodes to extract their image size as a Float2 value. Make sure the inheritance methods of each Input node's Output size and Output format properties are set to Relative to input, so that the node inherits the size and format of the data connected to it specifically.

I have attached a package with a small example of what the setup looks like and how it can be used. I hope it is helpful!

Best regards.

Many thanks Luca, this will work!

Quick follow-up question: is there a way to forcibly read a parent node's Output Size property without having to manually set your node to Relative to Parent?

For example, when you drag and drop a node into a graph, it defaults to Relative to Input. In my case I need that data, but I also need the parent node's Output Size property for some other calculations.

Should this be a separate post?

Hello again,

You may use a cheap node, such as a grayscale Uniform color, to output an image at the parent graph's resolution and use the Get Size node to get its resolution. Would that make sense?

Best regards.

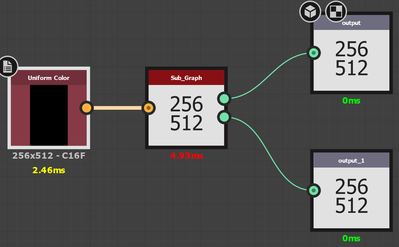

I've been trying this, but I think either it won't work the way we are trying to use it, or we are doing something wrong. The problem is that I'm trying to set my sub-graph's output size to match the parent's output size while letting the rest of the nodes in the graph use the input node's size.

For example, take this setup: the sub-graph is used as a node in a graph whose output size is set to 2048x2048. The input to the node receives something smaller than that, say 256x512. The node needs to output 2048x2048 to match the graph, but internally it needs to use 256x512 for a lot of other nodes' inputs.

Here is a simple graph demonstrating the problem:

Sub-Graph used as a node in another graph:

The Input - Get Size - Output chain runs Get Size on the input node.

The Uniform Color - Get Size - Output is doing what you suggested.

Here it is used within another graph:

The Uniform Color node stands in for a node of some specific size and is the input to the sub-graph above. The first green output corresponds to the top part of the previous screenshot, and the bottom output corresponds to the bottom part.

My expectation (or what I am trying to achieve) is that the top output should be 256x512 and the bottom output should be the 2048x2048 that this graph is set to.

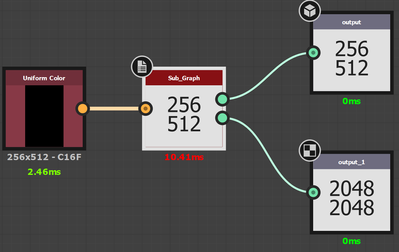

The "Sub_Graph" node was simply dragged and dropped into the graph with its default settings, but if I manually change its Output Size parameter to Relative to Parent:

What I'm hoping to do here is avoid the mistake of forgetting to change the Output Size in the "Sub_Graph" node's base parameters.

I'm thinking it isn't possible?
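
The behaviour described in this post can be modelled with a few lines of plain Python. This is only a sketch of the inheritance rules as they are described in this thread, not Substance Designer API code; `resolve_size` and the mode strings are made-up names used purely for illustration.

```python
from typing import Dict, Tuple

Size = Tuple[int, int]


def resolve_size(mode: str, parent: Size, connected: Size) -> Size:
    """Hypothetical stand-in for the Output Size inheritance method."""
    if mode == "relative_to_input":
        return connected  # take the size of the connected image
    if mode == "relative_to_parent":
        return parent     # take the size of the enclosing graph or node
    raise ValueError(f"unknown inheritance mode: {mode}")


graph_size: Size = (2048, 2048)  # graph that the Sub_Graph node is placed in
image_size: Size = (256, 512)    # image connected to the Sub_Graph node

results: Dict[str, Tuple[Size, Size]] = {}
for node_mode in ("relative_to_input", "relative_to_parent"):
    # Size the Sub_Graph node resolves to; nodes inside see it as their parent size.
    instance_size = resolve_size(node_mode, graph_size, image_size)
    # Top chain: Input (Relative to input) -> Get Size.
    top = resolve_size("relative_to_input", instance_size, image_size)
    # Bottom chain: Uniform Color (Relative to parent) -> Get Size.
    bottom = resolve_size("relative_to_parent", instance_size, image_size)
    results[node_mode] = (top, bottom)
    print(node_mode, "top:", top, "bottom:", bottom)
```

In this model the default Relative to Input setting makes both chains report 256x512, and only switching the node to Relative to Parent gives 256x512 on top and 2048x2048 on the bottom, which matches what is described above.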

Hi

I think this will do what you want

Make two input nodes and make the second one (input_1 in my screenshot) the primary input. Add a parameter (input_2) and set it to False. Set the visibility of that input to "visible if" input["input_2"] == true. That will hide the second input. You can also set the parameter to hide itself with the same expression.

When used as a subgraph it gives this. The hidden (but primary) input is picking up the parent size :
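
As a rough illustration of why this works, here is a small plain-Python model. It is not Substance Designer API code: the function name is hypothetical, and it assumes that a node set to Relative to Input falls back to its parent's size when nothing is connected to the primary input, which is the behaviour observed above.

```python
from typing import Optional, Tuple

Size = Tuple[int, int]


def resolve_relative_to_input(parent: Size, connected: Optional[Size]) -> Size:
    """Hypothetical model of the Relative to Input inheritance method."""
    # Assumption: with nothing connected, the size falls back to the parent's.
    return connected if connected is not None else parent


graph_size: Size = (2048, 2048)  # graph that the sub-graph node is placed in
image_size: Size = (256, 512)    # image connected to the visible input

# The hidden primary input can never receive a connection.
hidden_primary: Optional[Size] = None

# The sub-graph node is left at its default, Relative to Input.
instance_size = resolve_relative_to_input(graph_size, hidden_primary)

# The visible, non-primary Input node still reports the connected image's size.
visible_input_size = resolve_relative_to_input(instance_size, image_size)

print("sub-graph output size:", instance_size)
print("visible input size:", visible_input_size)
```

Under that assumption the node no longer has to be switched to Relative to Parent by hand: the sub-graph resolves to the graph's 2048x2048 while the visible input keeps reporting 256x512.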

Dave

Edit to add: I don't know why Get Size (Greyscale) is labelled Get Size (Color). It looks like a typo in the node programming.

Dave

Thanks Dave. Reproducing what you have does appear to be working. I'll try and put it into our graph and see if there are any other issues. The community/support here is awesome btw. Thanks again.

Hi @davescm,

Indeed, the Get Size Grayscale node's label uses the (Color) suffix. This is now fixed for our next bugfix release. The node's metadata was also set up in a way that prevented it from appearing in the Library. This is fixed as well, and the node should be listed in the same category as the Min Max node.

Best regards.

Excellent - thanks Luca

Dave
