GPU notes for Lightroom CC (2015)

Hi everyone,

I wanted to share some additional information regarding GPU support in Lr CC.

Lr can now use graphics processors (GPUs) to accelerate interactive image editing in Develop. A big reason that we started here is the recent development and increased availability of high-res displays, such as 4K and 5K monitors. To give you some numbers: a standard HD screen is 2 megapixels (MP), a 15" MacBook Pro with Retina display is 5 MP, a 4K display is 8 MP, and a 5K display is a whopping 15 MP. This means that on a 4K display we need to render and display 4 times as many pixels as on a standard HD display. Using the GPU can provide a significant speedup (10x or more) on high-res displays. The bigger the screen, the bigger the win.
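As a rough check on those numbers, here is a minimal sketch of the pixel-count arithmetic. The pixel dimensions are assumptions (common resolutions for each display class), not figures taken from this post.

```python
# Assumed (typical) pixel dimensions for each display class.
DISPLAYS = {
    "Standard HD": (1920, 1080),
    "15-inch MacBook Pro Retina": (2880, 1800),
    "4K": (3840, 2160),
    "5K": (5120, 2880),
}


def megapixels(width, height):
    """Return the pixel count of a display in megapixels."""
    return width * height / 1_000_000


hd_mp = megapixels(*DISPLAYS["Standard HD"])
for name, (width, height) in DISPLAYS.items():
    mp = megapixels(width, height)
    # Show each display's size and how many times more pixels it has than HD.
    print(f"{name}: {mp:.1f} MP ({mp / hd_mp:.1f}x HD)")
```

With these assumed dimensions the results come out at roughly 2, 5, 8, and 15 MP, and the 4K display has 4 times the pixels of the HD display.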

For example, on my test system with a 4K display, adjusting the White Balance and Exposure sliders in Lightroom 5.7 (without GPU support) is about 5 frames/second -- manageable, but choppy and hard to control. The same sliders in Lightroom 6.0 now run smoothly at 60 FPS.
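To put those frame rates in perspective, the sketch below converts them into the time available per frame. It uses only the two figures quoted above (5 and 60 frames/second); the function name is made up for illustration.

```python
def frame_time_ms(fps):
    """Return the time spent on each frame, in milliseconds, at a given frame rate."""
    return 1000.0 / fps


cpu_fps = 5    # Lightroom 5.7 figure quoted above (no GPU support)
gpu_fps = 60   # Lightroom 6.0 figure quoted above

print(f"{cpu_fps} FPS  -> {frame_time_ms(cpu_fps):.1f} ms per frame")
print(f"{gpu_fps} FPS -> {frame_time_ms(gpu_fps):.1f} ms per frame")
print(f"Speedup: {gpu_fps / cpu_fps:.0f}x")
```

At 5 frames/second each slider update takes 200 ms, which is why it feels choppy; at 60 FPS each update takes under 17 ms.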

So why doesn't everything feel faster?

Well, GPUs can be enormously helpful in speeding up many tasks. But they're complex and involve some tradeoffs, which I'd like to take a moment to explain.

First, rewriting software to take full advantage of GPUs is a lot of work and takes time, especially for software like Lightroom, which offers a rich feature set developed over many years and release versions. So the first tradeoff is that, for this particular version of Lightroom, we weren't able to take advantage of the GPU to speed up everything. Given our limited time, we needed to pick and choose specific areas of Lightroom to optimize. The area that I started with was interactive image editing in Develop, and even then, I didn't manage to speed up everything yet (more on this later).

Second, GPUs are marvelous at high-speed computation, but there's some overhead. For example, it takes time to get data from the main processor (CPU) over to the GPU. In the case of high-res images and big screens, that can take a LOT of time. This means that some operations may actually take longer when using the GPU, such as the time to load the full-resolution image, and the time to switch from one image to another.
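A back-of-the-envelope sketch of that transfer cost follows. Everything numeric here is a hypothetical assumption for illustration (image size, channel layout, and bus bandwidth); none of it describes how Lightroom actually stores or uploads image data, and copying is only one part of the overhead.

```python
def upload_time_ms(width, height, channels, bytes_per_channel, bandwidth_gb_per_s):
    """Estimate the time to copy one uncompressed image to the GPU, in milliseconds.

    Considers only raw copy time at a sustained bandwidth; driver overhead,
    format conversion, and synchronization are ignored.
    """
    size_bytes = width * height * channels * bytes_per_channel
    return size_bytes / (bandwidth_gb_per_s * 1e9) * 1000.0


# Hypothetical example: a 48 MP image, 4 channels, 2 bytes per channel,
# copied over a bus that sustains an assumed 8 GB/s.
estimate = upload_time_ms(8000, 6000, channels=4, bytes_per_channel=2,
                          bandwidth_gb_per_s=8.0)
print(f"Estimated copy time: {estimate:.0f} ms")
```

Under these assumptions a single copy costs several frames' worth of time at 60 FPS, which is in line with image switching feeling slower even when slider adjustments feel faster.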

Third, GPUs aren't best for everything. For example, decompressing sequential bits of data from a file -- like most raw files, for instance -- sees little to no benefit from a GPU implementation.

Fourth, Lightroom has a sophisticated raw processing pipeline (such as tone mapping HDR images with Highlights and Shadows), and running this efficiently on a GPU requires a fairly powerful GPU. Cards that work with the Photoshop app itself may not necessarily work with Lightroom. While cards that are 4 to 5 years old may technically work, they may provide little to no benefit over the regular CPU when processing images in Lr, and in some cases may be slower. Higher-end GPUs from the last 2 to 3 years should work better.

So let's clear up what's currently GPU accelerated in Lr CC and what's not:

First of all, Develop is the only module that currently has any GPU acceleration whatsoever. This means that other functions and modules, such as Library, Export, and Quick Develop, do not use the GPU (performance should be the same for those functions regardless of whether you have GPU enabled or disabled in the prefs).

Within Develop, most image editing controls have full GPU acceleration, including the basic and tone panel, panning and zooming, crop and straighten, lens corrections, gradients, and radial filter. Some controls, such as local brush adjustments and spot clone/heal, do not -- at least, not yet.

While the above description may be disappointing to some of you, let's be clear: This is the beginning of the GPU story for Lightroom, not the end. The vision here is to expand our use of the GPU and other technologies over time to improve performance. I know that many photographers have been asking us for improved performance for a long time, and we're trying to respond to that. Please understand this is a big step in that direction, but it's just the first step. The rest of it will take some time.

Summary:

1. GPU support is currently available in Develop only.

2. Most (but not all) Develop controls benefit from GPU acceleration.

3. Using the GPU involves some overhead (there's no free lunch). This may make some operations take longer, such as image-to-image switching or zooming to 1:1. Newer GPUs and computer systems minimize this overhead.

4. The GPU performance improvement in Develop is more noticeable on higher-resolution displays such as 4K. The bigger the display, the bigger the win.

5. Prefer newer GPUs (faster models within the last 3 years). Lightroom may technically work on older GPUs (4 to 5 years old) but likely will not benefit much. Aim for at least 1 GB of GPU memory; 2 GB is better.

6. We're currently investigating using GPUs and other technologies to improve performance in Develop and other areas of the app going forward.

The above notes also apply to Camera Raw 9.0 for Photoshop/Bridge CC.

Eric Chan

Camera Raw Engineer

Soooo jealous of those monitors! 🙂

I remember seeing the rest of your specs before. Did you try physically taking two of your 980s out to test with just one? If you do, leave the one closest to the CPU in there and take out the two further away. If that does anything, it might be worth it for you to trade those three cards in for a single Quadro card. The GTX cards, while fast, don't support the full color range of your monitors. People with wide-gamut displays are one of only two groups to whom I ever suggest that "upgrading" from GTX to Quadro might be worth it. I put that in quotes because the Quadros have the same chips as the GTX cards but cost about 4 times more, because advanced features (e.g. 10-bit color) are enabled on Quadro cards but disabled on GTX cards.

The newest and best Quadro, the M6000, has the GM200 chip, which is the same high-end chip as in the GTX 980 Ti and Titan X (not the 980, which has a GM204). It's basically a Titan X, which costs $1,000, with 10-bit color support added, making it cost about five times more at $5,000 (link to Newegg -> PNY Quadro M6000 VCQM6000-PB 12GB 384-bit GDDR5 PCI Express 3.0 x16 10.5" x 4.4", Dual Slot Workstat...). It's an unfortunate pricing scheme, indeed, but they market to institutions rather than individuals. The good news, though, is that the M6000 is the most expensive Quadro, and others with 10-bit support are cheaper. They will just be slower than one of the 980 cards you have now. Whether that matters depends on whether you play games or do heavy 3D content editing, since it wouldn't slow you down much for LR and PS.

In any case, having a GTX card on a 10-bit display isn't that much of an issue at all. They just won't have all of the subtlety you paid for. But the displays will still use the additional color range to approximate better color from an 8-bit signal, and they will look pretty damn good.

You know, I haven't actually physically removed the additional cards. I'll give it a shot. I wish it was easy to "trade in" the GTX 980s for a Quadro. I honestly didn't know that the GTX 980s didn't support 10-bit color until you just mentioned it, so that would have been a great option, but it's not offered on the Alienware configuration.

Yeah, stuff like that gets buried deep in spec sheets that bigger computer system companies usually don't provide. I've been building custom systems particularly for content creators for a while now. I'll shoot you a message to put us in contact in case you or anyone you know has a need. Talking to someone for such a specialized use helps a lot because of all the technical details involved with part matching.

Well, you are extremely lucky. But that probably means there is hope for the rest of us once Adobe discovers why it is so slow on some other state-of-the-art machines, like an HP Z820 with 128 GB of RAM and a Quadro 6000 or a Titan Black, where I can't even make one brush stroke without a lag of about 2 seconds.

Sent from the Moon

A note for Quadro 6000 / 4000 etc. users: they are Fermi-based, so essentially a GeForce 480 / 460 from the beginning of 2010 with the same amount of power. I would not expect much of them.

Wolansky, what are the total specs of your Z820?

I only have a Quadro K2000, but it has seemed far superior to the usual run of NVidia or Radeon gaming cards. The essential difference seems to be in the drivers, not the cards so much. The Quadro drivers are designed for reliability, not gaming. I used to have problems with earlier versions of LR and NVidia or Radeon gaming cards; we used to have to tweak the driver settings to get them to work properly with LR. I finally switched to the lower-end Quadros and have had no trouble at all since then. Worth every penny, IMO.

Bob Frost

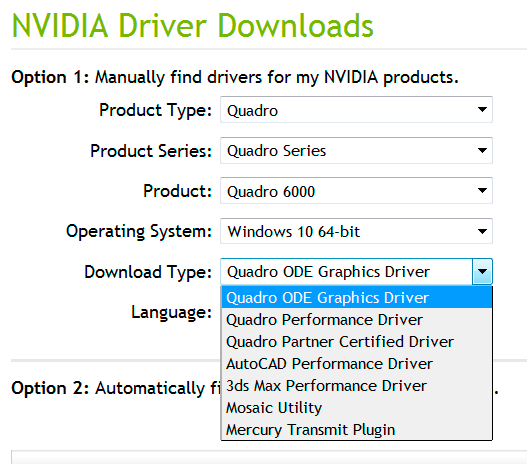

The GeForce boards have one driver type (Game Ready Driver), which is optimized for gaming.

The Nvidia Quadro drivers support a much wider range of applications, but it's important that you choose the most suitable Download Type. The Quadro ODE driver (Optimal Driver for Enterprise) is the best choice for LR. The Performance driver is the latest version of the driver. It's a Beta driver, which gets moved up as the ODE download after extensive field testing and bug fixes. So clearly not what you want to use with an app like LR. I have no stability issues with my older and very low-end Quadro 600 board using the latest ODE driver.

Agreed; I use the Quadro ODE drivers.

Bob Frost

Quadro M6000, not 6000, sorry. Also tested with a GTX 980 Titan X.

128 GB of RAM, and a PCIe SSD that gives me about 1300 MB/s; not sure what else on the machine would make a difference. The software is just slower than before, GPU on or not. It's better now, but still slower than the good old 5.7.1.

Sent from the Moon

How would you do this with a MacBook?

With all these comments, is Adobe not going to sort it out? I have a 2015 MacBook Pro... surely that's a good rig to do what we expect the software to do?

I'm not sure if anyone else has had issues with the latest AMD driver update (7/8/2015). I installed it and began having issues with Lightroom showing "Not Responding", and I would have to exit the program. I ended up rolling back to the previous driver version from 2014 and it seems to be back to normal. Just FYI.

I've just installed video driver 15.2 and now Lightroom fails every time. The GPU acceleration passes its startup test and can be seen in the Performance tab as being operational, but the rest of the program won't work. I can't switch modules, open a library, view a different image, or anything. I can start up Lightroom, but as soon as I attempt to use anything, the whole thing hangs and has to be shut down.

Do not attempt to use the updated driver! I'm running a Radeon 6800 by the way.

Eric,

Is one manufacturer better than another?

I have a Radeon R9 with 8 GB DDR5 and 2 GPUs. I have seen some increase in performance; however, I did expect to see more. My laptop has 4 GB DDR5 on an NVidia GPU, and the speed difference is awesome with a single GPU.

Hi Chan, I already read all that stuff a few years ago. I'm frankly quite annoyed, given this partial GPU compatibility, to still be reading the same stuff. A lot of work, yes; that's what makes a program, not magic. What are the Adobe people waiting for?

Thanks,

س

Lightroom is far from being fully compatible with the GPU the way Aperture used to be; Aperture uses almost 100% of the VRAM and the GPU, but Lightroom uses them only for some silly tasks like zooming. The Adobe engineer, Chan or Chen, gave the same speech in 2015 (and also many years ago): hardware isn't prepared yet. There is logic in that if you read the full speech, but I frankly don't give a shit about logic at this moment.

I'm having one heck of a dilemma here.

I recently purchased the Canon 5DSR as a bride/groom portrait-only camera body, and I discovered that I needed to upgrade to LR 6 or CC in order to edit these images. Well, after doing so, everything slowed down massively. I assumed it was due to the file size of the Canon 5DSR images. But then, checking my Mark III images, they were equally slow.

Well, I figured, why the heck not, let's go crazy and purchase the new iMac Retina 5K at 3.5 GHz with 32 GB of RAM and the 1 TB Fusion Drive... So now what's the issue? I can't seem to speed up CC for the life of me, and I'm getting frustrated beyond belief that I have spent $7000 to lose edit time and still not have a solution.

Adobe, I don't understand the point of an "UPGRADE" if it's going to kill me when I edit. The blur between images, the loading time, the lag with the bars, it's exhausting.

What am I doing wrong? Everything is top of the line and I can't edit worth a dink vs LR 5.7 (which I loved).

So please, please, help me here and tell me how to optimize CC 2015 in order to get the best bang for my buck. I kind of deserve it.

joshuam4396817 wrote:

I can't seem to speed up CC for the life of me

We'll need more specifics. WHAT is slower? Where is the catalog stored? Where are the images stored? Is GPU enabled in Prefs > Performance or not? What settings are you applying by default?

Victoria - The Lightroom Queen - Author of the Lightroom Missing FAQ & Edit on the Go books.

Victoria,

I have tried everything. Lightroom is slower by 35% if I were to put a number on it. I'm talking about editing. It's a brand new iMac. I have tried loading one file from my Canon Mark III and one file from the Canon 5DSR, and both are almost equally slow. GPU has been disabled and enabled, I have changed preview sizes and quality, and I have moved the files, along with the cache, to the desktop. I have tried building smart previews versus not. I have updated everything.

CC is the problem. I moved from Lightroom 5.7 because they don't make the DNG/ACR file that is needed for 5.7, so I am forced to upgrade to Lightroom 6 or CC in order to edit the camera raw images from the Canon 5DSR. I am at a complete loss. I have no idea what to do to solve the problem.

It can take anywhere from 4-6 seconds to transition between images. After making an edit with a slider, such as the color, instead of it gliding smoothly, I have to click where I want it and then wait for an additional 3-5 seconds. When I make changes with any of the other "basic" sliders, it takes about 2 seconds per transition. When I make changes with the tone curve, it takes 3-5 seconds. These are seconds that I honestly don't have the time to wait for.

CC is doubling my workflow time. It used to take me 4 hours to edit 500 images; now it's pushing past 8. This is probably the most ridiculous "upgrade" I have ever seen. I feel CC shouldn't even be out yet if these problems exist. It feels more like a cheap program that Adobe wanted to roll out and get subscribers on with the promise of fixing these things down the road.

GPU is a joke, especially when I have this supposed mega computer that I can't even use for it because it's a 5k. But it was made for a 5k. So, now I'm forced to return the 5k, keep my late 2013 model, re-load lightroom 5.7 for my mark iii images and figure out a different route for the canon 5dsr. Because CC just doesn't cut it for the working full time professional.

Any solutions will help, but I have tried everything.

joshuam4396817 wrote:

GPU is a joke, especially when I have this supposed mega computer that I can't even use for it because it's a 5k.

See that's what's confusing me, as I have the same machine - very slightly faster processor and SSD instead of fusion - and I'm not seeing those kind of timings. With the GPU on, initial loading time is slightly slower while it passes the data to the GPU, but that's worth leaving on because of the 5K screen. I don't have any Canon 5DSR files (I tested some other big ones like Nikon D800 + Canon 5DMk3), but if you'd like to send me some using www.wetransfer.com, I'd be happy to try it. I'm away from Tuesday, but that gives you a couple of days to send some.

Just to check, you are aware that the free standalone DNG converter can convert the new files for use with 5.7 too? Might be worth trying as a test for comparison, as the 5DSR files are obviously much bigger than you were used to working with. There are other options too, that might be worth considering for these huge files, such as creating smart previews overnight and then taking the originals offline. You should find those much faster to work with, as they're a much lower file size.

Victoria - The Lightroom Queen - Author of the Lightroom Missing FAQ & Edit on the Go books.

It is not because of the size of the files... I work with a Pentax 645Z, 50 megapixels too... LR 5.7.1 moves those files very easily, pretty fast, no effort... LR CC 2015 is SLOOOOOOWWWWW... Adobe just has to get that piece of software back to the design table and start over...

I'd like to use a GPU, but I don't even know how you guys enabled this in LR 5.7.1. I don't see a Performance tab.

It seems like most people think you need 6.0 to use a GPU.

5.7.1 does not have that option.

Sent from the Moon

Credit where it's deserved... I just installed today's update, and it works MUCH better with GPU ON. It's actually usable!!!!

How do you like the new Import section?

Have not used it yet. Only using the CC version with stuff I shot with the 5DSR since I can't load it in 5.7.1. But will give it a try now with the next job.

Sent from the Moon
