Does AI Denoise slow down when applied to a sequence of thousands of images?

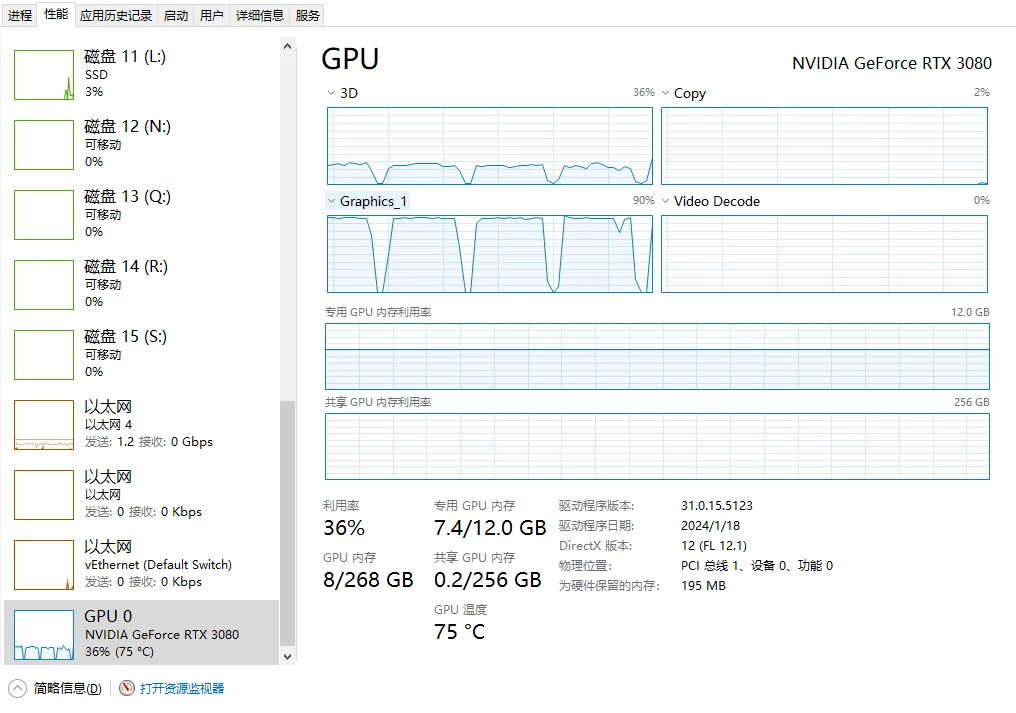

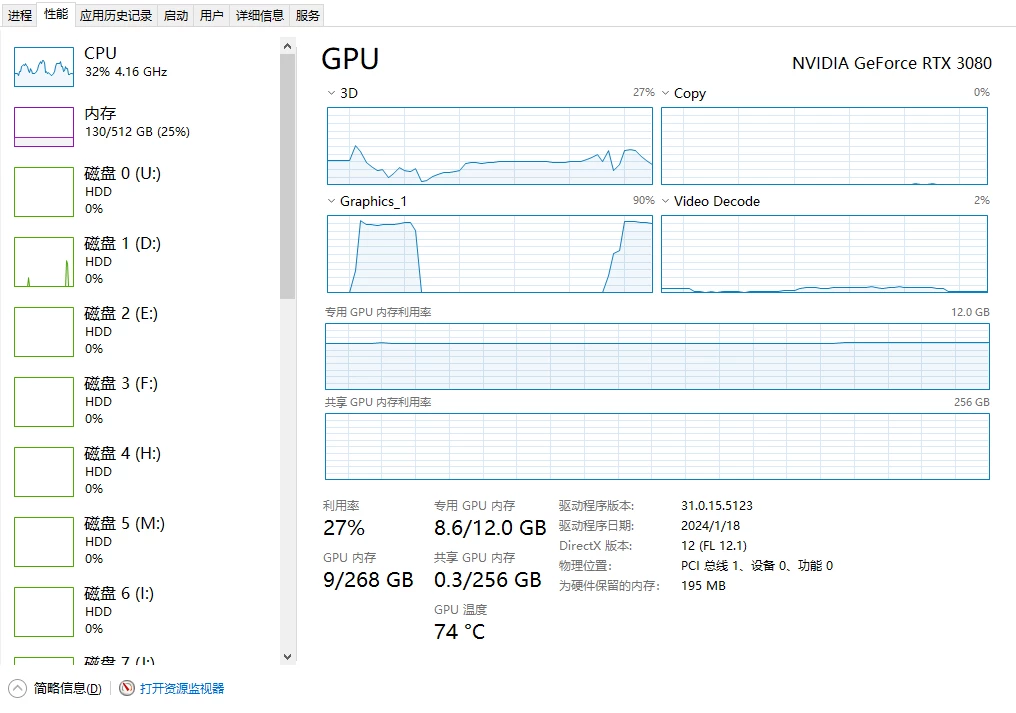

Hello Adobe technician, I have run into a problem with the AI noise reduction feature in Photoshop and Lightroom. When I shoot time-lapse photography and apply AI noise reduction to a sequence of two to three thousand images, processing starts off very fast. After a while, however, it gradually slows down, and I notice that the intervals between bursts of GPU activity get longer. Only restarting the computer returns the GPU intervals to their initial state, and they gradually lengthen again afterwards. This happens on all three of my computers.

What might be causing this?

Is there any way to improve the situation?

Additionally, my motherboard supports multiple graphics cards. Is there a way to use them to speed up AI noise reduction?

Thanks.