Adobe Community

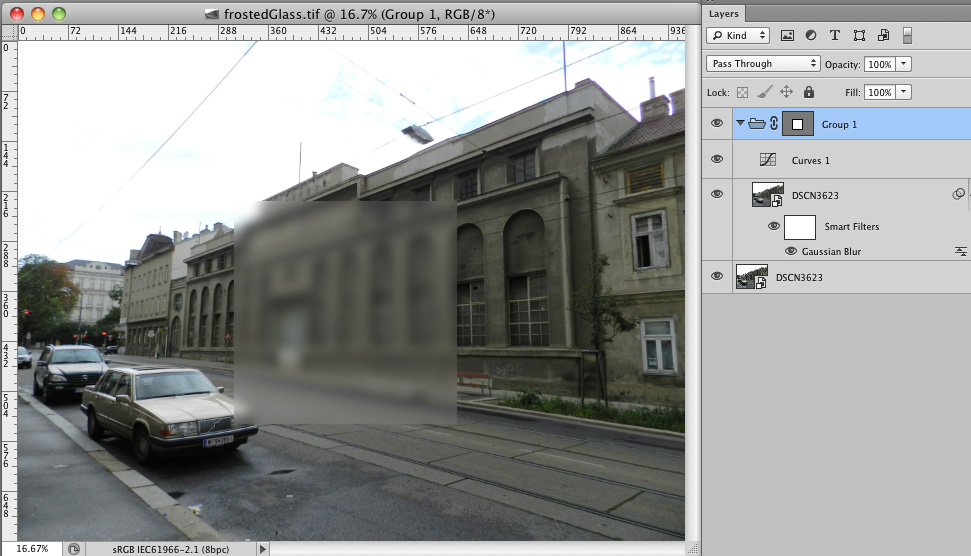

Frosted glass..

Hello everyone!

How can I make a frosted glass effect as an independent layer that I can use with any picture? It should behave like a smart object with a smart filter, but I don't want to convert every picture to a smart object, because my PSD document includes many pictures. I want to be able to use this filter anywhere, on any picture.

Thank you ...

If the Filter>Distort>Glass gives the effect you want, you can use Layer>Smart Objects>Replace Contents to insert a different photo while keeping the effect.

"How can I make a frosted glass effect as an independent layer,"

Basically you can’t (if you and I have similar assumptions on what the effect should look like, posting an example might help clarify).

Adjustment Layers affect each pixel on its own, so I think at least one Filter application is necessary.

Your reasoning for not using a Smart Filter seems unclear to me; if by »many pictures« you mean many Layers those could be converted to one SO.

The short answer is that you can't, at least not exactly as you've stated. That said, there's no reason to think that a filter or some other series of steps can't be found or assigned to a function key that would allow you to create the effect easily on whatever image document you have open.

It sounds, though, like what you're hoping for is some kind of magic mixture of layer contents, blending mode, and layer styles that will give you the effect when that layer is pasted over the top of anything else, and I don't believe that's possible.

-Noel

Thanks all for replying! And sorry for my language..:)

c.pfaffenbichler, R.Kelly ... yes, I have many layers, but it's a big poster and I can't merge and convert all the layers, and I want to move this fragment of frosted glass over the whole surface of the poster.

Noel, but surely it's not hard for Adobe to make a tool like an Adjustment Layer: a simple layer with some filters that applies the effect to the layers beneath it.

"Noel, but surely it's not hard for Adobe to make a tool like an Adjustment Layer: a simple layer with some filters that applies the effect to the layers beneath it."

You would appear to be wrong.

As mentioned above Adjustment Layers work pixel for pixel and can therefore operate relatively fast.

Filters can produce results for each pixel that are dependent on huge numbers of other pixels and are therefore more calculation-intensive. (Edit: Or rather »can be«, naturally a Filter can also operate on a pixel by pixel basis.)
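The distinction drawn here can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names `brighten` and `box_blur` are made up for this example and are not Photoshop APIs, and a tiny grayscale grid stands in for an image. The first function is a point operation of the kind an Adjustment Layer performs; the second needs a whole neighborhood for every output pixel.

```python
# Hypothetical illustration; none of these names come from Photoshop.

def brighten(image, amount):
    """Point operation: each output pixel depends only on the same input pixel."""
    return [[min(255, p + amount) for p in row] for row in image]


def box_blur(image, radius):
    """Neighborhood operation: each output pixel averages the window around it."""
    height, width = len(image), len(image[0])
    out = []
    for y in range(height):
        row = []
        for x in range(width):
            total = count = 0
            # Visit every pixel in the (2*radius + 1)-wide window, clipped at the edges.
            for ny in range(max(0, y - radius), min(height, y + radius + 1)):
                for nx in range(max(0, x - radius), min(width, x + radius + 1)):
                    total += image[ny][nx]
                    count += 1
            row.append(total // count)
        out.append(row)
    return out


image = [
    [0, 0, 0],
    [0, 90, 0],
    [0, 0, 0],
]
brightened = brighten(image, 10)  # one read per output pixel
blurred = box_blur(image, 1)      # up to 9 reads per output pixel
```

With `brighten`, the cost per pixel is constant. With `box_blur`, the cost per pixel grows with the square of the radius, which is the reason a filter can be far more calculation-intensive than an adjustment.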

c.pfaffenbichler wrote:

"Filters can produce results for each pixel that are dependent on huge numbers of other pixels and are therefore more calculation-intensive."

Photoshop filters are hundreds to thousands of times slower than they need be. There are alternative apps costing under $50 that provide stacked filters with which you interact with no perceptible lag and without any of the stack being temporarily disabled. And they do that without the need to create a "Smart Object": you can paint, or otherwise make changes to layers, while the image is simultaneously filtered by an overlying stack of nondestructive filters.

The problem with Photoshop's ancient filters is that they were designed and coded for the limitations of the CPUs of 20+ years ago. Smart Filters on Smart Objects can be superseded by interactive nondestructive filter layers if the GPU were harnessed for the filters.

The GPU can be harnessed to produce amazing effects with no lag, as is seen in the new blur filters such as Tilt-Shift. Other apps are taking advantage of that power for all filters, and they provide far more control to the user than is available in Photoshop's relics.

There's all this reliance on a modern GPU for modern Photoshop to be runnable, yet the GPU lies untapped for many operations which can greatly benefit and provide a better user experience.

"Photoshop filters are hundreds to thousands of times slower than they need be. There are alternative apps costing under $50 that provide stacked filters with which you interact with no perceptible lag and without any of the stack being temporarily disabled."

What pixel dimensions, bit depths and layer (or similar structures) numbers are you talking about?

And which applications – maybe the OP would prefer to work with one of those?

Christoph, I'm talking about the difference in performance between filters evaluated by the GPU versus the CPU when the pixel dimensions and bit-depth are constant. The GPU is custom hardware - it can be thousands of times faster than a general purpose CPU.

I'm not going to mention the names of competitor apps here in an Adobe hosted forum.

Photoshop is miles ahead of other image editing apps in many respects, otherwise I wouldn't be using it and spending time contributing in this forum, but there are some aspects of the user experience which are a relic from decades ago. A more full utilisation of the GPU for the unsexy standard features would not only greatly improve Photoshop as it is now, but would enable the building of features which are not feasible without GPU support.

Perhaps not thousands, but I'll agree with hundreds of times faster (I'm just splitting hairs).

This is based on my own code and practical experience with accelerating algorithms via GPU.

FYI it is still easy to dream up GPU operations that are actually visibly slow, even with the use of OpenGL, shaders, and whatnot. Those that operate on hundreds of pixels for each of millions of pixels (such as what a Frosted Glass filter might do) can easily still bog a modern GPU down, simply because even GPU RAM access speed is not infinite.
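To put rough numbers on "hundreds of pixels for each of millions of pixels", here is a back-of-the-envelope sketch. The image size and window radius are hypothetical values chosen for illustration, and `neighborhood_reads` is a made-up helper, not part of any real filter.

```python
def neighborhood_reads(width, height, radius):
    """Count source-pixel reads for a square window of the given radius."""
    taps_per_pixel = (2 * radius + 1) ** 2
    return width * height * taps_per_pixel


# Hypothetical numbers: a 5000 x 4000 image and a 17 x 17 window (radius 8).
reads = neighborhood_reads(5000, 4000, 8)
print(f"{reads:,} reads per pass")  # 5,780,000,000 reads per pass
```

Even before any arithmetic is done on those values, a single pass under these assumptions touches memory billions of times, so memory bandwidth rather than raw compute can become the limit.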

And it's not all a panacea yet for another reason: Modern CPUs can now access virtually unlimited RAM, and a new Photoshop powerhouse might have 24 or 48 GB of RAM on it, but you still don't see GPUs much with more than 1 to 2 GB. So chunking through data on a big image involves a lot of moving of data between CPU and GPU.
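The chunking problem can be estimated with simple arithmetic. The sketch below assumes a hypothetical 30000 x 20000 pixel poster with 4 channels at 16 bits each and a hypothetical 1 GiB of usable GPU memory; the helper names are invented for this example, and real software would also need room for output buffers and tile overlap.

```python
import math


def image_bytes(width, height, channels, bytes_per_channel):
    """Uncompressed size of one image layer in bytes."""
    return width * height * channels * bytes_per_channel


def tiles_needed(total_bytes, gpu_budget_bytes):
    """Minimum number of chunks if each chunk must fit in the GPU budget."""
    return math.ceil(total_bytes / gpu_budget_bytes)


# Hypothetical poster: 30000 x 20000 px, 4 channels, 2 bytes per channel.
poster_bytes = image_bytes(30000, 20000, 4, 2)
# Hypothetical budget: 1 GiB of GPU memory available for pixel data.
tile_count = tiles_needed(poster_bytes, 1 * 1024**3)
```

Under these assumptions the single layer is 4.8 GB and has to be moved across the bus in at least 5 pieces, each of which is uploaded, processed, and read back.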

Lastly, GPU programming isn't exactly trivial or mature yet.

But with Adobe pushing forward into the arena, and others braving the waters as well, the future for accelerated computing is bright. Now if only the OS makers could continue making serious OSs to run the GPU-accelerated workstations of next year and beyond.

-Noel

"Now if only the OS makers could continue making serious OSs to run the GPU-accelerated workstations of next year and beyond."

This isn't going to help Photoshop since Ps is cross-platform, but take a look at frameworks for graphics that are provided with OS X.

http://www.google.com/search?client=safari&rls=en&q=core+image

http://www.google.com/search?client=safari&rls=en&q=core+graphics

I don't think the convergence of "real computers" and mobile devices is going to be the dumbing-down disaster that some are predicting, at least not with Apple. Lion and Mountain Lion have inherited a handful of features from iOS, yes, but they remain OS X with its rich APIs, and I'm not expecting Apple to abandon "serious" computing any time soon.

"but there are some aspects of the user experience which are a relic from decades ago."

Undeniably. (see the interface for Radial Blur …)

I suppose the Blur Gallery was kind of a test balloon, so hopefully coming versions may live up to your expectations for Filters’ performance better.

But (without insider knowledge) I would not expect the Filter Layer approach to be implemented anytime soon – and even if they should be working on it I expect Adobe employees will not be able to speak on the issue much because of the »no comments on upcoming versions«-policy.

In any case I see no viable option in Photoshop for the OP’s workflow demands currently.

You might want to learn more about GPUs and GPU programming before claiming that GPUs are a panacea.

GPUs are good at some things, and really horrid at others.

Many of the Photoshop filters would be SLOWER implemented on current and foreseeable GPUs (we've tried).

And many of the filters that might speed up need OpenCL to work well, and that's only recently stabilized (well, mostly stabilized).

Chris, why are you, an Adobe representative as well as an engineer, being so rude?

I never said any such thing like GPUs being a panacea!

What I do have experience of is other applications whose filters run hundreds of times faster on my computer than the equivalent Photoshop filters while producing results which are as good and sometimes better than Photoshop's. These apps are using the GPU to accelerate things which can be accelerated, and not producing miracles.

There's no need for you to feel personally insulted by my voicing a dissatisfaction with Photoshop.

Why are you reading rudeness into a comment that has none?

Your opening sentence was:

"You might want to learn more about GPUs and GPU programming before claiming that GPUs are a panacea."

It appears to be an attempt to ridicule my post with a straw man argument.

Nope, just pointing out that you really don't appear to know about GPUs or GPU programming, and are making a broad claim that isn't substantiated by the actual capabilities of current GPUs.

Chris, I wasn't making a broad claim. Again, you are misrepresenting my position. I don't understand why you are continuing to do that.

I don't need to know how to program a GPU to know that I have software which uses the GPU to hugely accelerate some tasks that aren't (yet) GPU-accelerated by Photoshop. Nothing you write will change that fact into me making a claim of GPU panacea.

You claimed that Photoshop could use the GPU to do things that GPUs cannot do.

You claimed that GPUs can solve several problems that they do not solve.

You don't use the word panacea, but you seem to think that GPUs solve all ills, which defines panacea.

Also, see post 9.

Yes, some other applications have written GPU accelerated filters or just used Apple's GPU code.

But Photoshop isn't as simple as those applications, and has to work with larger images than most of those applications.

Chris Cox wrote:

"You don't use the word panacea, but you seem to think that GPUs solve all ills, which defines panacea."

For heaven's sake, man!

I've explicitly stated that I do not think that GPUs are a panacea. I used the damned word PANACEA when stating that I do not regard GPUs as a panacea.

How can I make it any clearer that I do not think GPUs are a panacea?

Dunno how this got to be about GPUs, but why not?

To run code on a GPU, you have to rewrite the function or whole filter or whatever so that it is sent to, and accepts reply info from, the GPU, which is double the code. Then you have to figure out the most optimized way to run it through the vastly different processor structure. You know, like how most have several hundred cores. There's multithreaded coding for like 2-4 cores, and then there's splitting up data over hundreds of cores and finishing it all at once in like a quarter second total.
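The "splitting up data" step can be sketched on the CPU with Python's standard thread pool. This only illustrates the decomposition, under the assumption that the operation is independent per row; the helper names are hypothetical, and Python threads will not show GPU-like speedups.

```python
from concurrent.futures import ThreadPoolExecutor


def invert_rows(rows):
    """Work done by one worker: invert every pixel in its share of rows."""
    return [[255 - p for p in row] for row in rows]


def split_rows(image, parts):
    """Cut the image into at most `parts` contiguous bands of rows."""
    size = max(1, -(-len(image) // parts))  # ceiling division
    return [image[i:i + size] for i in range(0, len(image), size)]


def invert_parallel(image, workers=4):
    """Send each band to a worker, then stitch the results back in order."""
    chunks = split_rows(image, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = list(pool.map(invert_rows, chunks))
    return [row for chunk in results for row in chunk]


image = [[x + 10 * y for x in range(4)] for y in range(6)]
inverted = invert_parallel(image, workers=4)
```

The split is easy here because no pixel needs its neighbors. A filter that reads across band boundaries would need overlapping bands, which is part of the reorganizing work described in this thread.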

Then there's rewriting old code from scratch. Like the Reduce Noise filter. GPUs don't typically take pixels, compare them to the ones next to them, make a decision, then calculate a modification. A desktop CPU specializes in that. So getting that written as GPU code is scary. That time could be better used making better, smarter filters and stuff. So I can wait 3 seconds instead of 100 ms for my i5 to do a Reduce Noise.

So yeah, they're a bit far from sending every single operation in every layer to the GPU to improve performance under the circumstances I'm hearing complaints about.

Sorry I used the word panacea. No accusations were intended on my part.

It occurs to me that if Photoshop were designed from the ground up today things would likely be done differently than they are (and which probably can't be practically changed without doing that ground up rewrite).

An anecdote: I was just doing some testing on one of my plug-ins with a preset that maxes out all controls to do a stress test. This embodies a large number of operations multiplied by a huge number of pixels, just as I mentioned above.

The GPU displayed the preview with the (tens of thousands of) effects the plug-in generated rendered on top of the original image in just a few seconds. The screen was basically covered. Not interactive, but not unusably slow.

When I hit [OK] to commit the effects back to the image, the plug-in switches to using the CPUs (all cores simultaneously, of which I have 8) to render the effects, since they're done in a bit higher accuracy than what's done for the preview. That's been running now, pushing a progress bar across and speeding up my cooling fans, for about 5 minutes. It's only a small percentage of the way through.

For graphics tasks specifically suited to GPU operations, yes, GPUs can be hugely faster than CPUs. But let me tell you, it weren't no easy job to make it work like this. My version 1 of the plug-in did everything in the CPU, and it became clear that while it was acceptable when a fairly small number of effects were generated, people would expect more. The task of porting the plug-in to use OpenGL / shaders / etc. was literally about 1.5 man-years, just to make it do the same things it did before, but GPU-accelerated. Part of that was development of a new framework which can be re-used, but part of it was simply reorganizing the task to be GPU-compatible, which would need to be repeated for another GPU-based development.

-Noel

Hi Noel

Noel Carboni wrote:

"Sorry I used the word panacea. No accusations were intended on my part."

There was nothing offensive about your post, and your use of the word panacea didn't bother me. The repeated misrepresentation of my statements by an Adobe employee was irritating.

"It occurs to me that if Photoshop were designed from the ground up today things would likely be done differently than they are (and which probably can't be practically changed without doing that ground up rewrite)."

That's the situation that I see, and there seems next to no probability in the near future of Adobe committing the vast resources that would be required to develop a fully modern equivalent to Photoshop.

Thanks for the informative account of the difficulties that can be encountered when developing GPU-accelerated software.

This seems rather simple, unless I missed something, so I'm not sure why people are saying it can't be done. Make a cool-looking frosted glass effect with the methods others mentioned above, but make sure the background is transparent and the layer's white areas are blank, not actually white. Then save it as a PNG-24 file with transparency. Those can handle variable transparency, so for any future project you want to overlay it on, just open that PNG file, select all, copy, and paste it over the other file as the top layer. If you can't end up with anything other than an image with a bunch of white instead of mostly transparent, you can do everything I said previously but then change the layer blending mode to Overlay or possibly another mode.
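What a semi-transparent overlay does to the picture beneath it can be sketched with the standard "over" compositing formula. This is a minimal sketch assuming normal blending and a single 8-bit channel; `composite_over` is a name made up for this example.

```python
def composite_over(fg, alpha, bg):
    """Blend one 8-bit channel value; alpha runs from 0.0 (transparent) to 1.0 (opaque)."""
    return round(fg * alpha + bg * (1.0 - alpha))


# A white overlay pixel at 25% opacity over a mid-dark background pixel.
result = composite_over(255, 0.25, 100)  # 139
```

Each output value depends only on the overlay pixel and the background pixel at the same position. An overlay of this kind can lighten, tint, or add texture, but it cannot scatter or blur the pixels underneath it.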

"This seems rather simple, unless I missed something, so I'm not sure why people are saying it can't be done. Make a cool-looking frosted glass effect with the methods others mentioned above, but make sure the background is transparent and the layer's white areas are blank, not actually white."

I seem to expect something different than you from a »frosted glass«-effect, namely it affecting the underlying image in more ways than brightness, color or blending with other pixel content.

But as the OP has not provided an example of what they expect, your approach might fit their expectations after all.
