- Using 16-bits per channel when editing old JPEGs


I have some old JPEG photos that were shot about 15 years ago on a consumer-level Canon. I'm going to retouch them heavily, save them back to JPEG, and, as a final step, share them on the web. They won't be printed.

Does that mean it would be better to switch to 16 bits per channel while I edit them in Photoshop (given that I will use the sRGB color profile, which is quite restrictive), so that the extra bit depth helps me avoid visual artifacts? (Artifacts like "banding", which appear when you lose some of your colors through heavy adjustments of hue, saturation, curves, and so on.)

8-bit JPEGs → Adjusting them in Photoshop in 16-bit sRGB → Saving them back to 8-bit JPEGs. Is it a good workflow?

[Typo in subject fixed by moderator as per OP's following post]

1 Correct answer


As others have said, switching an 8 bits/channel file to 16 bits/channel does not gain anything - but neither does it lose anything. Those 256 levels in the original file are just slotted in to 256 distinct levels from the 65,536 available in a 16-bit/channel file. (Actually in Photoshop 16 bit there are 32,769 levels but that doesn't matter here).
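To illustrate the "slotting in" described above, here is a minimal sketch. It assumes the 16-bit range is 0 to 32768 and that the conversion is a plain rescale with rounding; Photoshop's actual internal formula is not given in this thread, so `to_16` and `to_8` are hypothetical helpers.

```python
# Assumption: 16-bit mode uses levels 0..32768 and the 8 <-> 16 conversion
# is a simple rescale with rounding (not confirmed for Photoshop internals).
MAX8 = 255
MAX16 = 32768

def to_16(v8):
    """Hypothetical 8-bit -> 16-bit conversion."""
    return round(v8 * MAX16 / MAX8)

def to_8(v16):
    """Hypothetical 16-bit -> 8-bit conversion."""
    return round(v16 * MAX8 / MAX16)

levels16 = [to_16(v) for v in range(MAX8 + 1)]
round_trip = [to_8(v) for v in levels16]

print(len(set(levels16)))              # 256 distinct 16-bit levels are occupied
print(round_trip == list(range(256)))  # True: converting back loses nothing
```

Under these assumptions the conversion on its own neither adds nor removes information; any benefit has to come from the adjustments made while in 16 bit.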

However, when you then go on to make adjustments then 16bits/channel does have an advantage. In 8 bits/channel each adjustment calculates a new lev

...


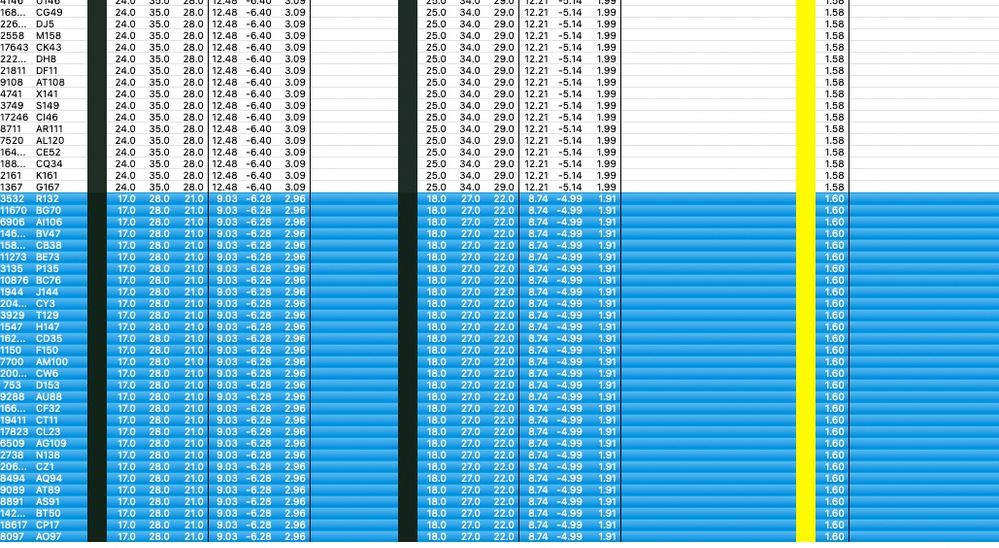

I don't understand how you get these results, or what you're testing.

Are you saying you do not get these histograms you see here?

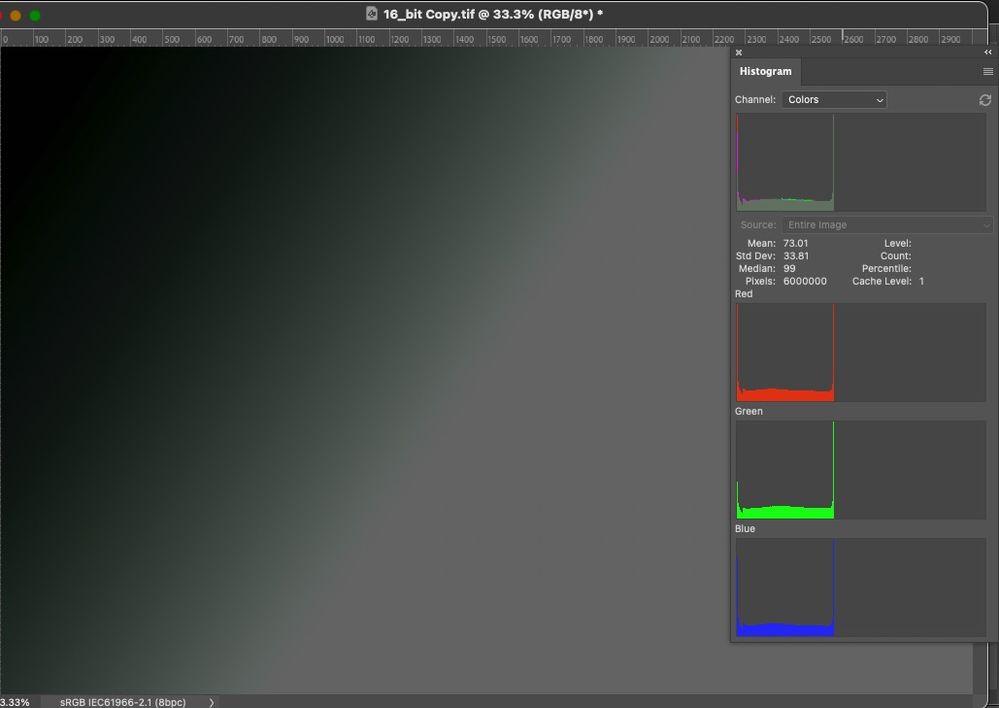

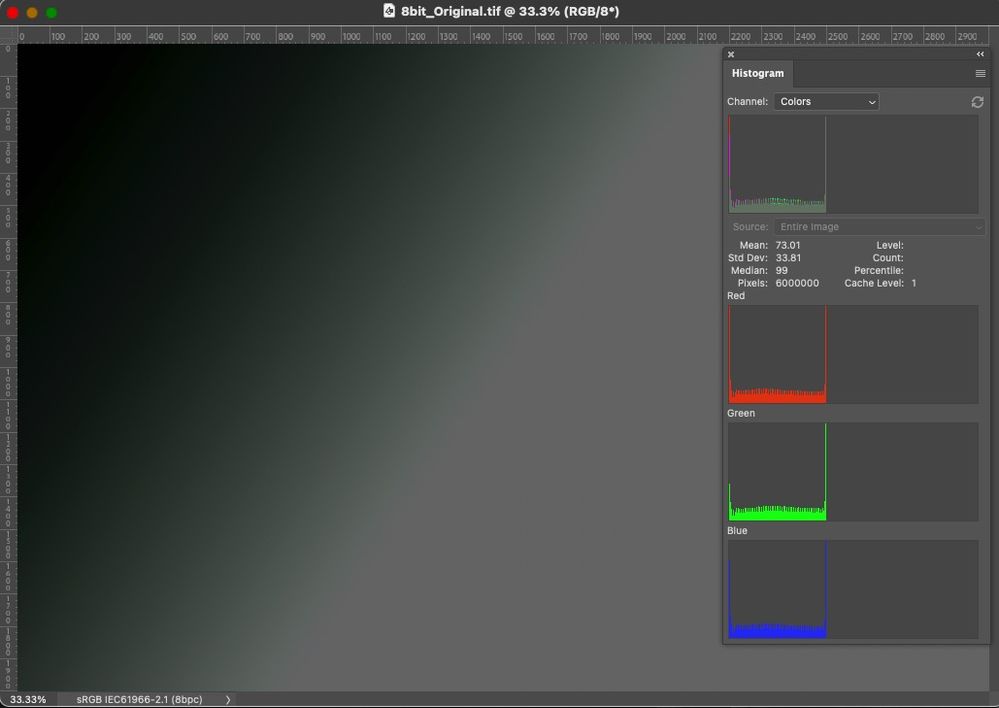

This is the result of adjusting an 8 bit file, vs. converting to 16 and doing the same adjustment, then converting back to 8. Note that these are refreshed histograms, not cached.
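A rough way to see why the two histograms can differ is to count how many output levels survive a pair of opposing adjustments when every intermediate result is rounded to the working bit depth. The sketch below is only a model: the gamma 2.2 darken/brighten pair is an assumed stand-in (not the edit used for the screenshots), and the 0 to 32768 range and rounding behavior are assumptions as well.

```python
MAX8, MAX16 = 255, 32768

def apply_gamma(levels, gamma, max_level):
    """Apply a gamma curve and round each result to the working bit depth."""
    return [round(max_level * (v / max_level) ** gamma) for v in levels]

def edit(levels, max_level):
    """Two opposing adjustments: darken, then brighten by the inverse curve."""
    darker = apply_gamma(levels, 2.2, max_level)
    return apply_gamma(darker, 1 / 2.2, max_level)

source = list(range(256))  # every possible 8-bit level once

# Path 1: stay in 8 bit the whole time.
edited_in_8 = edit(source, MAX8)

# Path 2: 8 bit -> 16 bit -> same edits -> back to 8 bit.
in_16 = [round(v * MAX16 / MAX8) for v in source]
edited_in_16 = [round(v * MAX8 / MAX16) for v in edit(in_16, MAX16)]

levels_8 = len(set(edited_in_8))    # occupied histogram bins, 8-bit path
levels_16 = len(set(edited_in_16))  # occupied histogram bins, 16-bit path
print(levels_8, levels_16)
```

In this model the 8-bit path ends up with fewer occupied levels (a "combed" histogram), because shadow values that collapse to the same integer after the first adjustment cannot be separated again by the second.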

Incidentally, this very same procedure is how an NEC Spectraview or Eizo ColorEdge works. The 8 or 10 bit signal from the video card is converted to 16 bits, run through the calibration tables, then converted back to 10 bits and sent to the panel.


I don't understand how you get these results, or what you're testing.

I outlined what I've been testing: I compare the data after the edits, and the two results are identical.

I've built Dave's images and edited them as described.

I subtract them, there is no difference. The Histograms are identical.

There is no difference on my end, editing on 8-bit per color data OR on the 8-bit per color converted to 16-bit per color data.

But AGAIN, there will be a massive difference in the two IF Dither is on. I do not have that set to be on for a fair test. I've asked, and will ask again, are you guys doing all this with Dither on or off?


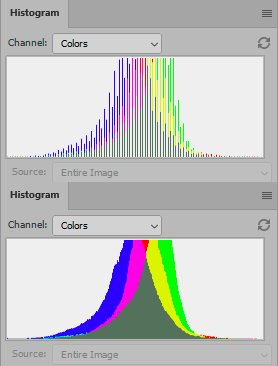

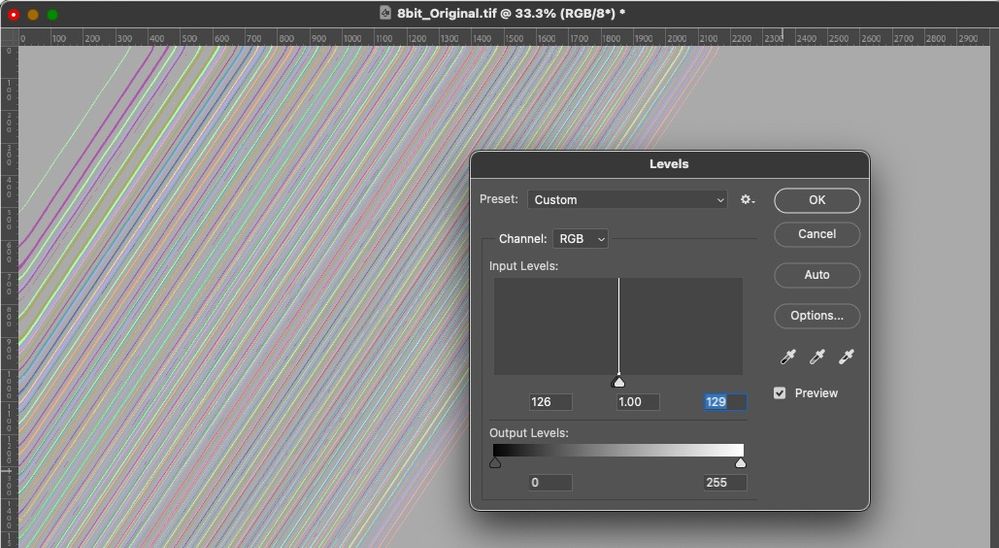

I turned off dither and I still get these refreshed histograms:

top 8 bit adjustment

bottom 8 bit > 16 bit adjustment > 8 bit


I turned off dither and I still get these refreshed histograms:

From what, doing what?

Again, here are Dave's tests and the histograms; it's all in my public Dropbox.

And again, there is no difference between the two tests on this end, in any of the kinds of analysis I've tried. But since histograms seem to be part of this, here you go:

Do I see slight differences in the histogram? Yes, but nothing like yours, and there is still a cache level that can't be avoided. Note that the edited 16-bit document WAS converted back to 8 bits above; that has to be done for the calculations. But that's exactly what the OP will end up doing.

Do I get a dE of 1 or less with the resulting 6000 pixels, does subtracting the two produce a nearly zero difference? Yes.

The differences compared are invisible! On a synthetic gradient with some pretty severe editing.
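For readers who want to reproduce a dE figure for a single pair of pixels, here is a minimal sketch. The thread does not say which dE formula or which tool was used, so this assumes plain CIE76 (Euclidean distance in CIELAB) with a D65 white point and the standard sRGB transfer curve; `srgb_to_lab` and `delta_e76` are illustrative names.

```python
import math

def srgb_to_lab(rgb):
    """Convert an 8-bit sRGB triplet to CIELAB (D65 white; an assumption here)."""
    def linear(c):
        c /= 255.0
        return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4

    r, g, b = (linear(c) for c in rgb)
    # Linear sRGB -> CIE XYZ (D65)
    x = 0.4124564 * r + 0.3575761 * g + 0.1804375 * b
    y = 0.2126729 * r + 0.7151522 * g + 0.0721750 * b
    z = 0.0193339 * r + 0.1191920 * g + 0.9503041 * b

    def f(t):
        d = 6 / 29
        return t ** (1 / 3) if t > d ** 3 else t / (3 * d * d) + 4 / 29

    fx, fy, fz = f(x / 0.95047), f(y / 1.0), f(z / 1.08883)
    return (116 * fy - 16, 500 * (fx - fy), 200 * (fy - fz))

def delta_e76(rgb1, rgb2):
    """CIE76 color difference between two 8-bit sRGB colors."""
    return math.dist(srgb_to_lab(rgb1), srgb_to_lab(rgb2))

# One 8-bit step in the red channel at the gradient's end color (40, 60, 25):
print(delta_e76((40, 60, 25), (41, 60, 25)))
```

With these assumptions, a single-level difference in one channel of a dark color comes out below 1 dE, which is consistent with the "dE of 1 or less" reported above for pixels that differ by one level.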

As yet, I see zero reasons to take the time to convert 8-bit per color data to 16-bits but if my testing is flawed, I'd like to know where.


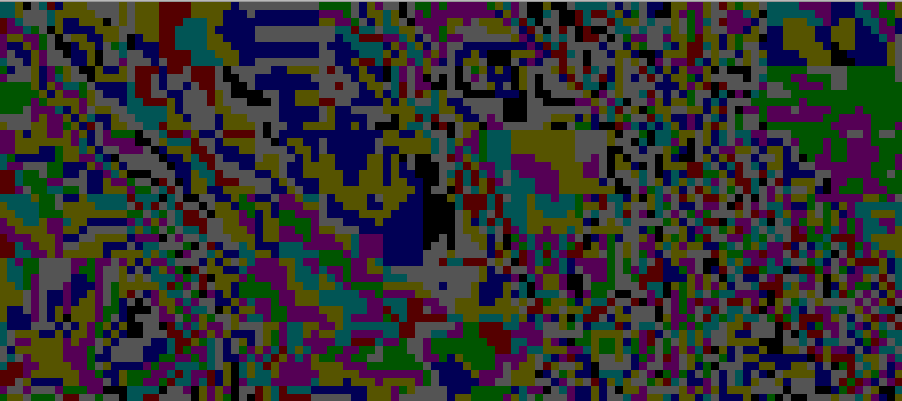

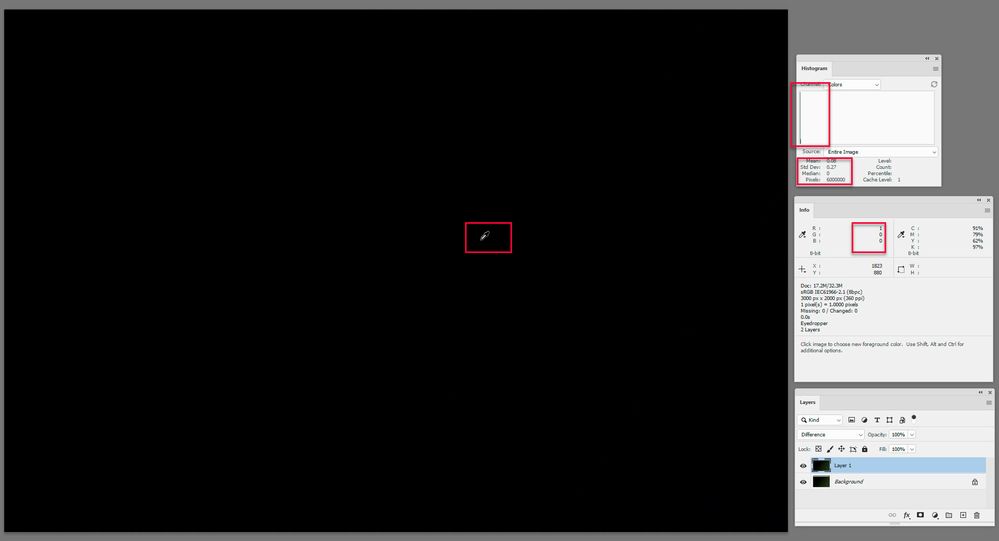

I've used a real photograph, not a test file. Maybe that's why.

If I overlay the two in Difference blend mode, and exaggerate to make it visible, it looks like this (zoomed in to pixels):

In any case, all these discussions tend to boil down to can you see it. Well, maybe not - but IMO that's not the point. There's a cumulative effect to all this, and you never know when you will cross the threshold to where it does become visible.

In other words - I'm not interested in whether I can see it. I'm interested in whether it's there at all. If it is, I don't want it 😉


I've used a real photograph, not a test file. Maybe that's why.

Dave's test is a torture test due to the edits and that they are applied on Gradients. So the results should be even worse than whatever you did. I suggest you try his step by step and see what you get.

In other words - I'm not interested in whether I can see it. I'm interested in whether it's there at all. If it is, I don't want it.

Based on what I've tried with Dave's test outline, it can't be seen. The numeric differences are so low that they could be due to anything, such as the multiple steps in Photoshop. Look at the highest dE values among the 6000 device values sampled from the gradient:


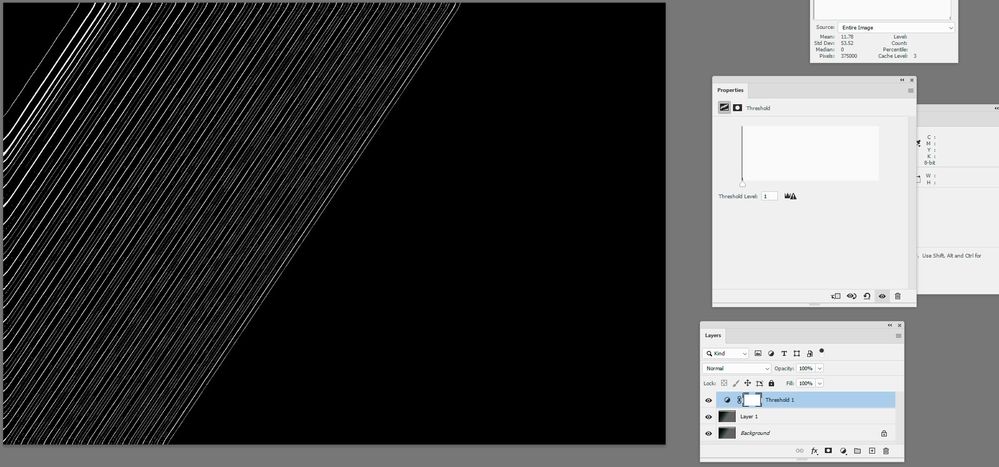

I'm still not on board.

Here I did the same adjustments as on the photograph above, but on a shallow gradient:

I cannot possibly accept that there is no benefit to converting to 16 bits for adjustments, then converting back to 8.

Again, this is the whole principle behind the high-bit internal LUTs in NEC Spectraviews and Eizo ColorEdges.


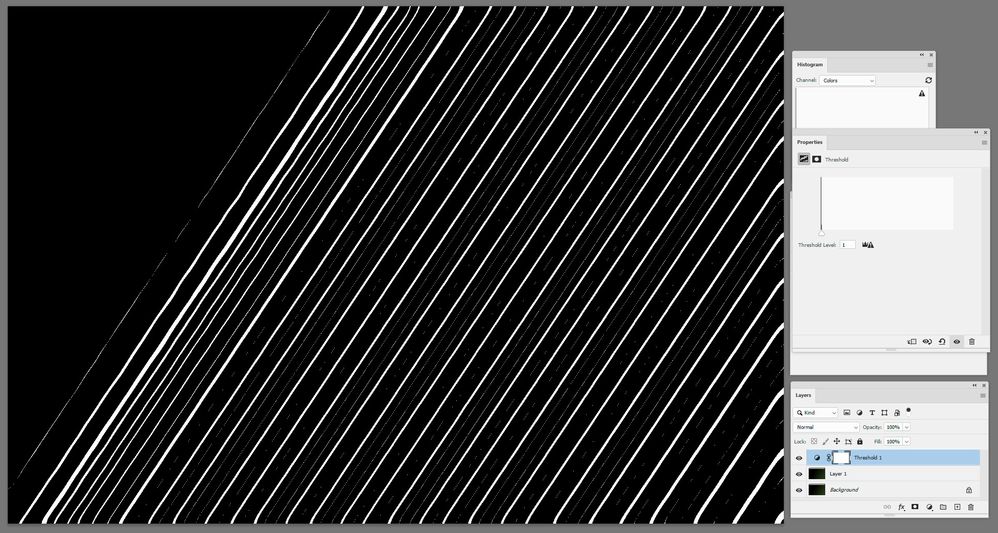

Hi

An update now that I am back in my office. First I checked dither. I do have dither on for the gradient but that is irrelevant as the duplication of the image was after the gradient was applied, so both starting images are identical. However I also checked Color Settings and "Use dither for 8 bit" was checked so I unchecked it and repeated the test. I still get a difference:

My steps (I've clarified slightly but not changed them)

1. Create document 3000px x 2000px 8 bit sRGB

2. Use gradient tool to create a gradient from top left pixel RGB 0,0,0 to bottom right RGB 40,60,25

3. Duplicate the image (using Image duplicate)

4. Convert the duplicate to 16 bit using Image Mode

5. In both documents :

Image > Adjustments > Exposure +3.0

Filter > Blur > Gaussian Blur 20px

Image > Adjustments > Exposure -3.0

6. Convert the 16 bit document back to 8 bit using Image Mode

7. Drag the duplicate (now 8 bit) back over the original as a layer, using shift key to align perfectly and set blending mode to difference

I then added one more step

8. Add a threshold adjustment layer to highlight where those differences are

I agree the differences are small but that is with only three simple changes.
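The steps above can be approximated outside Photoshop with a small simulation. This is only a sketch under stated assumptions, not Photoshop's actual math: it uses a single channel on a one-dimensional ramp from 0 to 25 (the blue endpoint in step 2), models Exposure as multiplication by 2^stops, substitutes a box blur for Gaussian Blur, assumes a 0 to 32768 range for 16 bit, and rounds half up after every operation.

```python
MAX8, MAX16 = 255, 32768

def quantize(x, max_level):
    """Round half up and clip to the valid range (assumed rounding rule)."""
    return min(max_level, max(0, int(x + 0.5)))

def exposure(pixels, stops, max_level):
    """Assumed model of Exposure: multiply by 2**stops, then quantize."""
    gain = 2.0 ** stops
    return [quantize(p * gain, max_level) for p in pixels]

def box_blur(pixels, radius, max_level):
    """Box blur as a simple stand-in for Gaussian Blur."""
    out = []
    for i in range(len(pixels)):
        window = pixels[max(0, i - radius): i + radius + 1]
        out.append(quantize(sum(window) / len(window), max_level))
    return out

def run_edits(pixels, max_level):
    """Step 5: Exposure +3, blur with radius 20, Exposure -3."""
    pixels = exposure(pixels, 3, max_level)
    pixels = box_blur(pixels, 20, max_level)
    return exposure(pixels, -3, max_level)

# Steps 1-2: an 8-bit ramp, one channel, 3000 pixels wide.
width = 3000
original = [quantize(25 * i / (width - 1), MAX8) for i in range(width)]

# 8-bit throughout.
edited_8 = run_edits(original, MAX8)

# Steps 3-6: duplicate, convert to 16 bit, edit, convert back to 8 bit.
duplicate_16 = [quantize(v * MAX16 / MAX8, MAX16) for v in original]
edited_16 = run_edits(duplicate_16, MAX16)
back_to_8 = [quantize(v * MAX8 / MAX16, MAX8) for v in edited_16]

# Steps 7-8: Difference blend, then a threshold at one level.
difference = [abs(a - b) for a, b in zip(edited_8, back_to_8)]
flagged = sum(1 for d in difference if d >= 1)
print(max(difference), flagged)
```

In this model the two results are not identical: a small number of pixels near each level transition differ by one 8-bit level, which matches the character of the result described here (small differences, but not zero).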

I'll add the two files shortly. If I've missed something please let me know.

Dave


I do have dither on for the gradient but that is irrelevant as the duplication of the image was after the gradient was applied, so both starting images are identical.

The check box Use Dither (8-bit/Channel Images) is used when converting 8-bit per color images.

Remember your step 4:

4. Convert the duplicate to 16 bit using Image Mode

Gotta be off.

I still get a difference:

I've supplied your test image first mentioned in Dropbox. The differences are numerically invisible. It's easy to examine them and comment and it's easy to subtract the two; do you see the Levels results I provided? Do you get the same results with your images, or mine, and if not, what's the difference in how they were created?


Completely moot in this discussion!

By @TheDigitalDog

Not moot at all. It's exactly the same principle, used for exactly the same purpose. Start with 8 bit, convert to 16 bit, perform adjustment (calibration tables), convert back to 8 bit, output.


@D Fosse wrote:

Completely moot in this discussion!

By @TheDigitalDog

Not moot at all. It's exactly the same principle, used for exactly the same purpose. Start with 8 bit, convert to 16 bit, perform adjustment (calibration tables), convert back to 8 bit, output.

Fine, the results then are as we (some of us) see here, invisible. And at least on this end, further, my entire video path IS high bit. But that's a different topic.


I've just downloaded the two images that Andrew posted to Dropbox. They show the same results as mine did. Not every pixel is identical between the 8-bit-throughout method and the method of converting 8 bit to 16 bit, adjusting, then converting back to 8 bit.

I agree that, in this test, the resulting differences are small, and with low dE unlikely to be visible to the eye. However the nature of introducing rounding errors on each adjustment is that they are cumulative and therefore the more adjustments the more the likelihood of the cumulative error reaching a point where it is visible. What it takes to reach that depends on both the image and the actual adjustments undertaken.
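The cumulative nature of rounding can be sketched by comparing both workflows against an unrounded floating-point reference after each additional adjustment. The chain of gamma values below is hypothetical, chosen only to mimic a series of mild curve-like edits; the 0 to 32768 range for 16 bit is also an assumption.

```python
MAX8, MAX16 = 255, 32768
GAMMAS = [1.3, 0.9, 0.75, 1.2, 0.85, 1.1]  # hypothetical chain of edits

def max_error_after(steps):
    """Worst-case error (in 8-bit levels) versus an unrounded reference."""
    worst8 = worst16 = 0
    for v in range(256):
        x_ref = v / MAX8                 # floating point, never rounded
        x8 = v                           # rounded to 8 bit after every edit
        x16 = round(v * MAX16 / MAX8)    # rounded to 16 bit after every edit
        for g in GAMMAS[:steps]:
            x_ref = x_ref ** g
            x8 = round(MAX8 * (x8 / MAX8) ** g)
            x16 = round(MAX16 * (x16 / MAX16) ** g)
        ref8 = round(x_ref * MAX8)
        worst8 = max(worst8, abs(x8 - ref8))
        worst16 = max(worst16, abs(round(x16 * MAX8 / MAX16) - ref8))
    return worst8, worst16

errors = [max_error_after(n) for n in range(1, len(GAMMAS) + 1)]
for n, (e8, e16) in enumerate(errors, start=1):
    print(f"after {n} edits: 8-bit path off by {e8}, 16-bit path off by {e16}")
```

How quickly the 8-bit error grows depends on the image and on the adjustments, as noted above; the sketch only shows that the 16-bit path stays within one level of the reference while the 8-bit path has no such guarantee.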

I therefore stand by my original premise that there is an advantage in carrying out adjustments in 16 bit even when starting with an 8 bit image.

As an aside, I carry out almost all my adjustments in a 16 bit/channel chain throughout, with the exception of some 32 bit linear work for 3D rendering - but that use of 32 bit is for a different reason entirely (HDR). I keep 8 bit for exports only. Like Andrew and Dag, my displays are both 10bit/channel (Eizo).

Dave
