AI prompt words inappropriately marked as violations

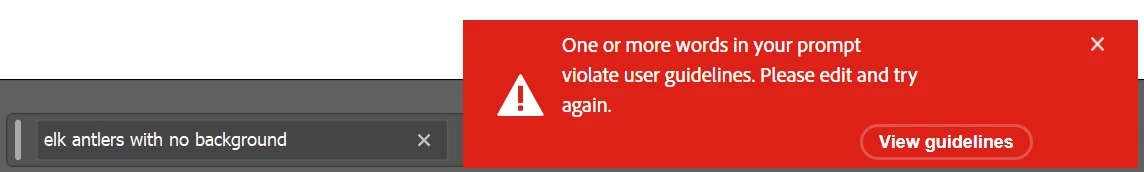

I have had this happen to me twice with completely benign words in my prompt. Several months ago, I had the word "lasso" in the prompt and kept getting the message that the prompt violated guidelines. I changed "lasso" to "lariat" and all was good. I'm assuming the middle three letters of "lasso" were the violation. At least, that's the only thing I can think of. HOWEVER, I have no idea what might be inappropriate in "elk antlers with no background". I started with "horns" and changed it to "antlers", thinking there might be some way to construe "horns" as dirty, but there was no change. "Antlers" is just as bad, apparently. It works with deer, reindeer, moose... but not elk.
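
The "lasso" guess would fit a filter that does plain substring matching, so a blocked term gets flagged even when it sits inside a harmless word. Here is a minimal sketch of that idea. The blocklist and both function names are hypothetical; the service's real list and matching logic are unknown.

```python
import re

# Hypothetical blocklist for illustration only; not the service's actual list.
BLOCKED = ["ass"]

def substring_flag(prompt: str) -> bool:
    """Flag the prompt if a blocked term appears anywhere, even inside another word."""
    text = prompt.lower()
    return any(term in text for term in BLOCKED)

def whole_word_flag(prompt: str) -> bool:
    """Flag the prompt only if a blocked term appears as a standalone word."""
    text = prompt.lower()
    return any(
        re.search(rf"\b{re.escape(term)}\b", text) is not None
        for term in BLOCKED
    )

if __name__ == "__main__":
    for prompt in ["cowboy with a lasso", "cowboy with a lariat"]:
        print(prompt, substring_flag(prompt), whole_word_flag(prompt))
```

Under these assumptions the substring check trips on "lasso" but not on "lariat", while the whole-word check passes both. Neither check would flag "elk antlers with no background", so this would not explain the elk case.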

I do understand having guidelines, but I think they need to be reviewed and maybe softened a little. Other services have not given me this issue, and I don't have to pay for them.