Lightroom Classic Using Incorrect GPU

I am on a 2019 Mac Pro. I have 3 graphics cards installed:

- 1 AMD Radeon PRO W6600

- 2 AMD Radeon RX 6900 XTs

The 6600 drives my monitor; the 6900s are used for their processing power (3D rendering, etc.). My understanding is that Lightroom Classic can only use the graphics card that the monitor is plugged into. That being the case, I switch my monitor over to a 6900 when I work in Lightroom so that I can take advantage of the superior horsepower for things like AI settings.

However, when I plug my monitor into either 6900, Lightroom ignores it and continues processing on the 6600. This is true even after restarting Lightroom. It will only process on the 6900s if I remove my 6600 from the machine entirely.

The 6900s are significantly more powerful and process AI settings in half the time, but my GPU setup works very well for the rest of my workflow, so removing the 6600 permanently is not an option.

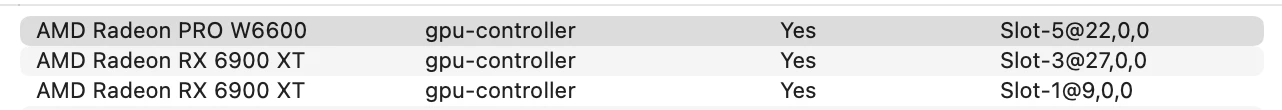

I am running the latest version of Lightroom Classic on macOS 15.5. Here is the arrangement in the Mac Pro:

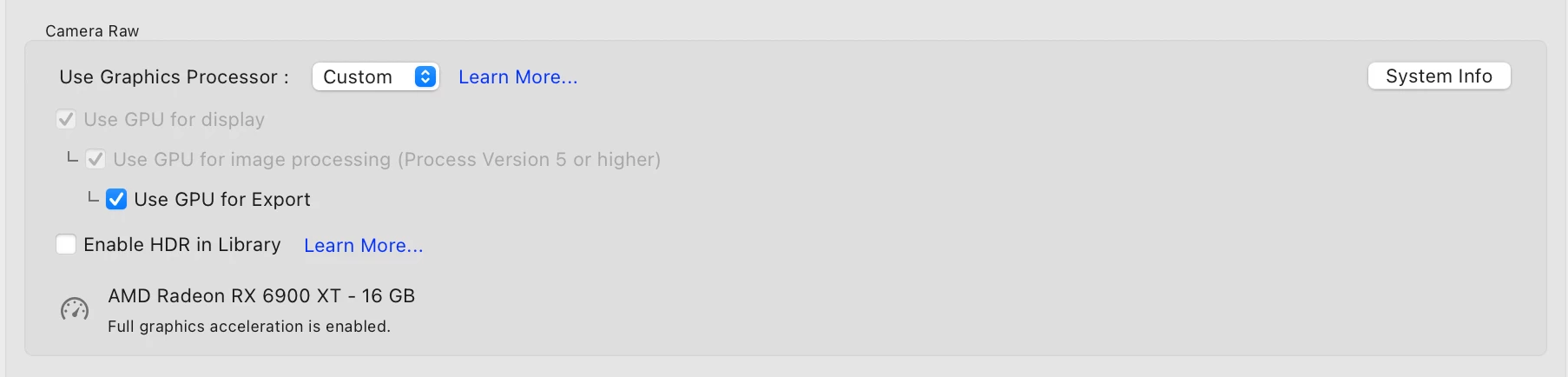

When my monitor is plugged into a 6900, it displays correctly in Lightroom, but Lightroom fails to use it:

Any help would be appreciated. Thanks!