- Re: Using 16-bits per channel when editing old JPE...

I have some old JPEG photos that were shot about 15 years ago on a consumer-level Canon. I'm going to retouch them heavily, save them back as JPEG, and, as a final step, share them on the web. They won't be printed.

Does that mean it would be better to switch to 16 bits per channel while I edit them in Photoshop (given that I will use the sRGB color profile, which is quite restrictive), so that the extra bit depth helps me avoid various visual artifacts? (Artifacts like "banding", and the ones that appear when you lose some of your colors due to heavy adjustments of hue, saturation, curves, whatever.)

8-bit JPEGs → adjusting them in Photoshop in 16-bit sRGB → saving them back to 8-bit JPEGs. Is this a good workflow?

[Typo in subject fixed by moderator as per OP's following post]

---

Sorry for a type in the title.

---

* typo

---

It's not 16-bit (high bit) from the get-go, so converting to it isn't going to buy you anything. And saving back as JPEG is going to cause even more data loss. IOW, converting to high bit doesn't magically give you more data.

I'd probably consider doing as much of the editing as possible on layers, or in something like Lightroom where the editing is parametric. But when the rubber meets the road and the edits are applied to the original data, there is going to be data loss.

---

"Converting to that isn't going to buy you anything." Yes, I do understand that opening an old JPEG in 16 bit won't somehow magically make it look better. What I mean is that editing it in 16 bit may give me more "room" for errors, so that those errors cause less damage. Or is that not how things really work?

---

Maybe this will help:

http://digitaldog.net/files/TheHighBitdepthDebate.pdf

You are not making more data or really even numeric padding.

---

"...or really even numeric padding."

You mean the "room" that makes my errors less destructive, right? I won't get such room?

The article is interesting, thanks for sharing it, but it requires a somewhat higher level of understanding than I currently have.

---

I agree that converting to 16 bit will not assist final image quality.

neilB

---

It will when you do further adjustments. It has been unambiguously demonstrated in this thread. IMO it's not even necessary to demonstrate - it can be deduced a priori.

---

@D Fosse wrote:

It will when you do further adjustments. It has been unambiguously demonstrated in this thread. IMO it's not even necessary to demonstrate - it can be deduced a priori.

"Tell people that there’s an invisible man in the sky who created the universe, and the vast majority will believe you. Tell them the paint is wet, and they have to touch it to be sure." - George Carlin

---

In case you aren't already, be sure to save your photos as Photoshop files and work non-destructively...

- Perform retouching on separate layers

- Make tone and color changes using separate adjustment layers

- etc...

Then save out JPEGs.

---

Layers, yes. But at some point, that gets flattened and the “non destructive to data” part ends. Unless you only live in Photoshop, the edits get applied: to print, to create/post even a non-layered TIFF, and more so to a JPEG.

Nondestructive while editing, yes. After? No. At least when all this is done in high bit, the eventual data loss is invisible. That's often not possible with 8-bit per color images.

---

Agreed. My comment was referring to editing in general. I was recommending against the direct manipulation and saving of JPEGs.

---

It really depends on what you want to do. By all means, toggling to 16-bit will give you much more headroom for new additions, effects, gradations, etc., and you can then export a final new render in 8-bit. It won't help you with the existing 8-bit elements, of course, but if you have a working file in 16-bit, you can make much more extreme Levels and Curves adjustments on the new 16-bit elements. This is a totally acceptable workflow to me if that's all you had as source material.

---

It won't help you with the existing 8-bit elements, of course, but if you have a working file in 16-bit, you can do much more extreme levels and curves adjustments on the new 16-bit elements.

But to make different extreme adjustments to 8-bit elements, switching Photoshop to 16 bit per channel won't give me any benefit?

---

If a jpeg is all you have, switching to 16 bit lets you make edits to it without any further damage.

And that's the whole point, right? You can't do anything with the existing image quality, but you can prevent further loss. So yes, it makes perfect sense. Convert to 16 bits and resave immediately to PSD or TIFF.

Also note that there is a new neural filter that specifically removes jpeg artifacts. I tested it out of curiosity the other day, and it seems remarkably effective. So I'd run that first. It can sometimes remove a tiny bit of genuine detail, so check visually and don't throw away the original.

---

As others have said, switching an 8 bits/channel file to 16 bits/channel does not gain anything, but neither does it lose anything. Those 256 levels in the original file are just slotted into 256 distinct levels from the 65,536 available in a 16-bit/channel file. (Actually, Photoshop's 16-bit mode has 32,769 levels, but that doesn't matter here.)
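A minimal Python sketch of the "slotting in" described above, assuming a simple proportional scale with rounding onto Photoshop's 0..32768 range (Photoshop's exact conversion formula is not given in this thread). It checks that the 256 levels land on 256 distinct 16-bit levels and come back unchanged when converted straight back to 8-bit with no edits in between.

```python
PS16_MAX = 32768  # Photoshop's 16-bit mode uses levels 0..32768 (32,769 levels)

def to_ps16(v8: int) -> int:
    # ASSUMPTION: proportional scaling with rounding; Photoshop's exact formula may differ
    return round(v8 * PS16_MAX / 255)

def to_8bit(v16: int) -> int:
    # Scale back to 0..255 with plain rounding (no dither)
    return round(v16 * 255 / PS16_MAX)

levels16 = [to_ps16(v) for v in range(256)]
distinct = len(set(levels16))
round_trip_ok = all(to_8bit(to_ps16(v)) == v for v in range(256))
print(distinct, round_trip_ok)  # 256 True
```

Nothing is gained and nothing is lost: the 16-bit file simply holds the same 256 levels with large empty gaps between them.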

However, when you then go on to make adjustments, 16 bits/channel does have an advantage. In 8 bits/channel each adjustment calculates a new level and that is rounded into one of the 256 available levels. Then the next adjustment does the same, and those rounding errors start to add up until you see stepping in the image (or combing in a histogram). By working in 16 bits/channel, values are still rounded, but the number of levels means that the errors are much smaller, and it takes an awful lot of cumulative adjustments before any become visible (if they ever become visible at all).
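To see the accumulation of rounding errors, here is a toy Python sketch. The two power curves (a gamma of 2.2 applied and then undone) are a hypothetical stand-in for "heavy adjustments", and each step simply rounds onto a 0..255 or 0..32768 grid; this is a simplified model, not Photoshop's internal math. It counts how many distinct output levels survive (fewer levels is what shows up as combing) and the worst error against the original.

```python
GAMMA = 2.2       # hypothetical strength of the "heavy" curve
PS16_MAX = 32768  # Photoshop 16-bit levels run 0..32768

def apply_curve(levels, exponent, max_level):
    # Apply a power curve and round each result onto the working grid (0..max_level)
    return [round(max_level * (v / max_level) ** exponent) for v in levels]

def convert(levels, src_max, dst_max):
    # Change bit depth by proportional scaling with rounding (no dither)
    return [round(v * dst_max / src_max) for v in levels]

original = list(range(256))  # every 8-bit level once

# 8-bit workflow: each intermediate result is rounded to 0..255
work8 = apply_curve(original, GAMMA, 255)   # strong darkening curve
work8 = apply_curve(work8, 1 / GAMMA, 255)  # curve that undoes it

# 16-bit workflow: convert once, round to 0..32768 at each step, export to 8-bit once
work16 = convert(original, 255, PS16_MAX)
work16 = apply_curve(work16, GAMMA, PS16_MAX)
work16 = apply_curve(work16, 1 / GAMMA, PS16_MAX)
final16 = convert(work16, PS16_MAX, 255)

distinct_8 = len(set(work8))  # fewer distinct levels shows up as combing
distinct_16 = len(set(final16))
max_err_8 = max(abs(a - b) for a, b in zip(work8, original))
max_err_16 = max(abs(a - b) for a, b in zip(final16, original))
print(distinct_8, distinct_16, max_err_8, max_err_16)
```

In this model the damage is concentrated in the shadows, where the darkening curve squeezes many 8-bit levels onto the same value; the 16-bit path only merges the very darkest levels. Whether such differences are visible in a real photo is exactly what the rest of the thread argues about.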

As an analogy: it's like having a ruler and marking points at 1, 2, 3, 4, 5, 6 ... 12 inches. Measuring those points with a second ruler accurate to 1/10 of a millimetre does not make any difference to the position of those points.

However, if you then make a series of calculations that will move those points, and say each calculation has to be rounded so that the new position falls on a ruler mark, using the 1-inch ruler means that after each calculation the result has to be slotted against whole inches, whereas with the second ruler, all adjustments can be slotted into 1/10 mm steps. Which will be more accurate after several, even small, adjustments? At the end, the final result may be slotted back into the inch steps of the 12-inch ruler (the equivalent of exporting as an 8-bit file), but that final rounding, done once, is better than a series of roundings at each calculation.
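The ruler analogy can be put into numbers with a small Python sketch. The four moves of 0.3 inch are made up for illustration: rounding to whole inches after every move never gets off the starting mark, while rounding to 1/10 mm steps and snapping to whole inches only once at the end lands where the exact answer (2.2 inches) would round to.

```python
MM_PER_INCH = 25.4
FINE_STEP = 0.1 / MM_PER_INCH  # 1/10 mm expressed in inches

def snap(x, step):
    # Round a position to the nearest mark on a ruler with the given spacing
    return round(x / step) * step

def move(start, shifts, step):
    # Apply each shift, rounding to the ruler marks after every calculation
    pos = start
    for s in shifts:
        pos = snap(pos + s, step)
    return pos

shifts = [0.3, 0.3, 0.3, 0.3]  # hypothetical small adjustments, 1.2 inches in total
coarse = move(1.0, shifts, 1.0)                  # whole-inch ruler at every step
fine = snap(move(1.0, shifts, FINE_STEP), 1.0)   # fine ruler, one final snap to inches
print(coarse, fine)  # 1.0 2.0
```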

Dave

---

However, when you then go on to make adjustments, 16 bits/channel does have an advantage. In 8 bits/channel each adjustment calculates a new level and that is rounded into one of the 256 available levels.

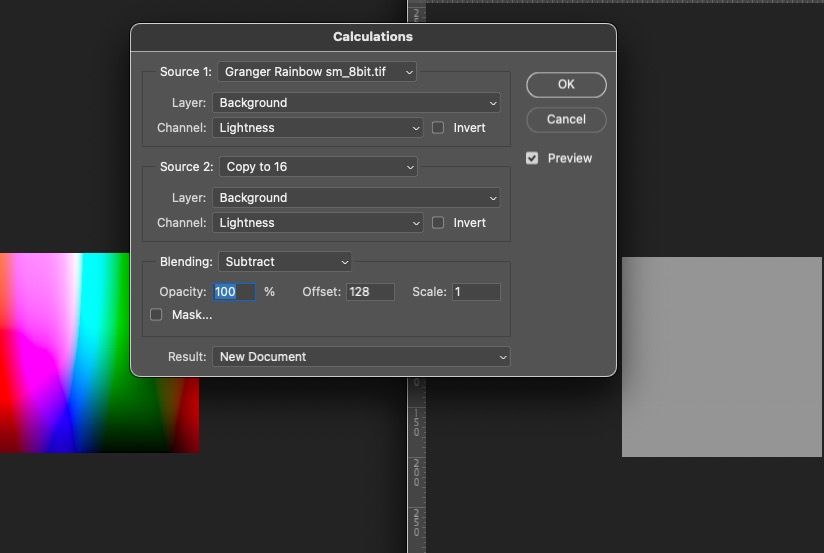

- I took a Granger Rainbow made in 16-bit.

- Converted it to 8 bits per color: our new test subject.

- I duplicated that to make a second, identical copy, then converted the copy to 16-bit, as the OP would with the JPEG.

- I applied Curves, a pretty radical curve adjustment, then, using the Option key, applied the same identical curve to the original (8-bit per color) copy.

- I now have two identical images with identical adjustments; the only difference is bit depth.

- I converted the 16-bit copy back to 8-bit, again, as the OP will. Dither IS off, as it should be.

- I used Calculations and subtracted one from the other: the result is that the two are FAPP (for all practical purposes) identical. There is zero difference between the two. See below.

Conclusion: converting 8-bit data to 16-bit data does nothing useful. In fact, there's no proof it does anything, but I await a proof of concept.

I'd be happy to upload to Dropbox the Granger Rainbow test image but you can try this with just about anything.
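The Subtract/Difference comparison used in these tests boils down to a per-pixel absolute difference. A minimal Python sketch, assuming images are given as equal-length lists of RGB tuples (a hypothetical layout, not a Photoshop API) and treating the comparison like the Difference blend mode rather than Calculations' Subtract with its scale and offset settings: if every count lands in the 0 bin, the two images are identical.

```python
from collections import Counter

def difference_histogram(img_a, img_b):
    # Histogram of |a - b| over every channel of every pixel (Difference-blend style)
    if len(img_a) != len(img_b):
        raise ValueError("images must have the same number of pixels")
    hist = Counter()
    for pa, pb in zip(img_a, img_b):
        for ca, cb in zip(pa, pb):
            hist[abs(ca - cb)] += 1
    return hist

# Two tiny hypothetical images that differ by one level in a single channel
a = [(10, 20, 30), (40, 50, 60)]
b = [(10, 20, 30), (41, 50, 60)]
h = difference_histogram(a, b)
identical = set(h) == {0}
print(dict(h), identical)  # {0: 5, 1: 1} False
```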

---

So in a similar test:

1. Create document 3000px x 2000px 8 bit sRGB

2. Use gradient tool to create a gradient from top left pixel RGB 0,0,0 to bottom right RGB 40,60,25

3. Duplicate the image

4. Convert the duplicate to 16 bit using Image Mode

5. In both documents :

Image > Adjustments > Exposure +3.0

Gaussian Blur 20px

Image > Adjustments > Exposure -3.0

6. Convert the 16 bit document back to 8 bit using Image Mode

7. Drag the duplicate (now 8 bit) back over the original as a layer, using guides to ensure positioning is identical and set blending mode to difference

Use the eyedropper and Info panel. If the documents are identical, every pixel will be RGB 0,0,0. They are not.
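Dave's steps can be approximated in Python as a sketch, with several assumptions flagged: a single-channel ramp from 0 to 40 across 3000 pixels instead of the diagonal RGB gradient, Exposure modeled as a 2^stops gain in linear light after standard sRGB decoding, and a simple box blur standing in for Gaussian Blur 20px. It runs the same chain at 8-bit and at 16-bit (exported once to 8-bit without dither) and counts differing pixels; the count depends on those modeling choices, so treat it as a way to repeat the experiment, not a replica of Photoshop.

```python
def srgb_to_linear(c):
    # Standard sRGB decoding, c in 0..1
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4

def linear_to_srgb(lin):
    lin = min(max(lin, 0.0), 1.0)
    return 12.92 * lin if lin <= 0.0031308 else 1.055 * lin ** (1 / 2.4) - 0.055

def exposure(levels, stops, max_level):
    # ASSUMPTION: Exposure modeled as a 2**stops gain in linear light
    gain = 2.0 ** stops
    return [round(max_level * linear_to_srgb(gain * srgb_to_linear(v / max_level)))
            for v in levels]

def box_blur(levels, radius):
    # ASSUMPTION: moving average with clamped edges stands in for Gaussian Blur
    n = len(levels)
    out = []
    for i in range(n):
        window = levels[max(0, i - radius):min(n, i + radius + 1)]
        out.append(round(sum(window) / len(window)))
    return out

def run_chain(levels, max_level):
    levels = exposure(levels, 3.0, max_level)
    levels = box_blur(levels, 20)
    return exposure(levels, -3.0, max_level)

WIDTH = 3000
ramp8 = [round(40 * i / (WIDTH - 1)) for i in range(WIDTH)]  # one channel, 0..40

result8 = run_chain(ramp8, 255)
ramp16 = [round(v * 32768 / 255) for v in ramp8]
result16 = [round(v * 255 / 32768) for v in run_chain(ramp16, 32768)]  # no dither

differing = sum(1 for a, b in zip(result8, result16) if a != b)
print(f"{differing} of {WIDTH} pixels differ")
```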

Dave

---

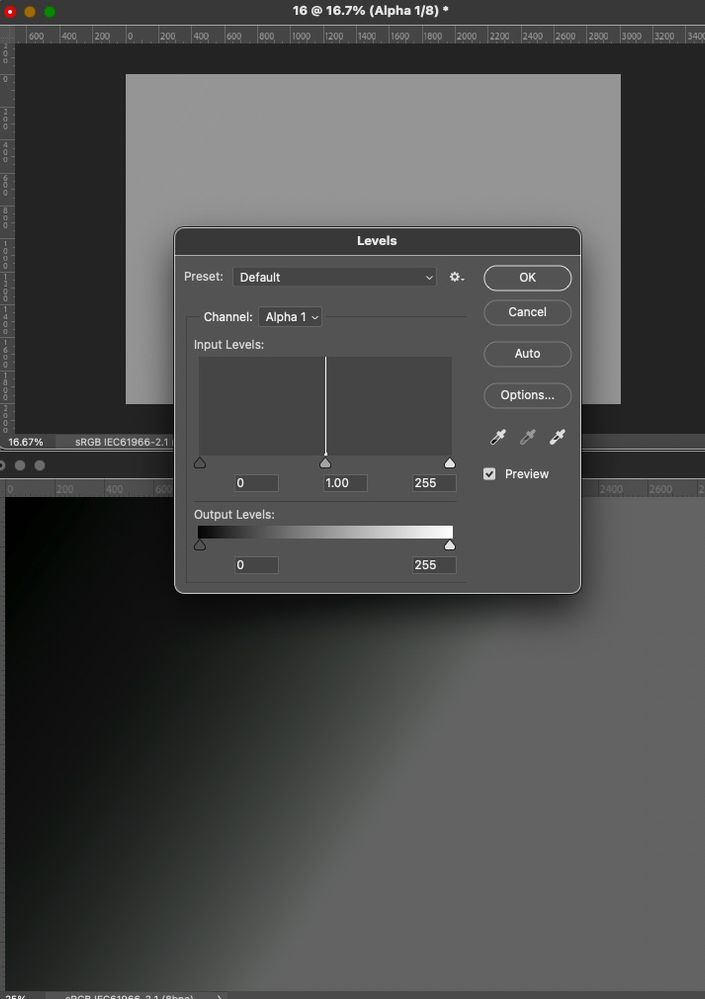

Did the following:

1. Create document 3000px x 2000px 8 bit sRGB

2. Use gradient tool to create a gradient from top left pixel RGB 0,0,0 to bottom right RGB 40,60,25

3. Duplicate the image

4. Convert the duplicate to 16 bit using Image Mode

5. In both documents :

Image > Adjustments > Exposure +3.0

Gaussian Blur 20px

Image > Adjustments > Exposure -3.0

6. Convert the 16 bit document back to 8 bit using Image Mode (DITHER HAS TO BE OFF FOR A FAIR TEST)

Now I stop. I'm not going to use the Eyedropper; my way of viewing differences is more robust.

Subtract one from the other, again: FAPP no difference:

If Dither is off, as it should be, and you subtract one test result from the other, what do you see in the Histogram of the results, as shown above?

---

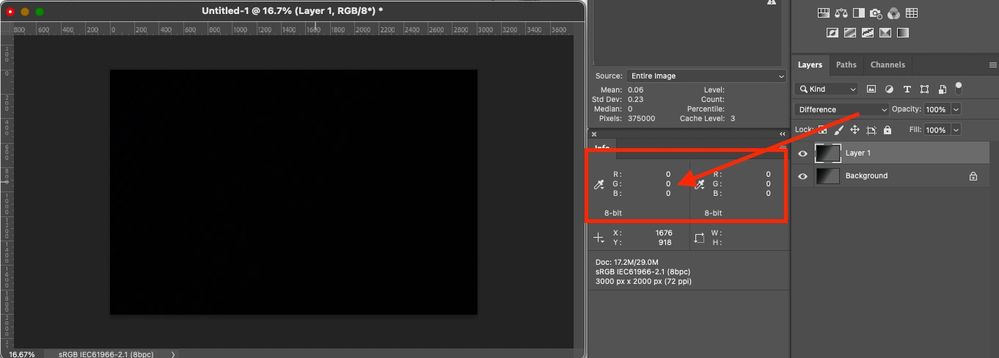

Drag the duplicate (now 8 bit) back over the original as a layer, using guides to ensure positioning is identical and set blending mode to difference

Use the eyedropper and Info panel. If identical, every pixel will be RGB 0,0,0. They are not.

Dragged and dropped the layer using the Shift key for registration. They are identical here over the entire area, with Sampling set to 11x11 pixels:

---

I used sampling 1 pixel and they were not. I'll repeat it when I get back to the office though just to ensure no mistakes.

Dave

---

The sampling is moot Dave. I get both 0/0/0 at any sampling and more importantly, a result from calculations whereby the entire image is black (gray with my settings), no differences. Why is that?

Again, do you have dither on? That would totally invalidate the test. You can't add noise to one image and expect it to match the other.

Why don't you upload both your finished test docs to something like Dropbox so others can see exactly what's going on?
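Why dither matters for this comparison can be shown with a small Python sketch. The uniform noise of up to half a level added before rounding is an assumed, simplified dither, not Photoshop's algorithm. Converting the same 16-bit ramp to 8-bit with and without it changes many pixels even though no edit was made, which would show up as differences in any subtraction test.

```python
import random

PS16_MAX = 32768

def to_8bit(levels16, dither=False, seed=0):
    # Convert 0..32768 levels to 0..255, optionally adding noise before rounding
    rng = random.Random(seed)
    out = []
    for v in levels16:
        x = v * 255 / PS16_MAX
        if dither:
            # ASSUMPTION: uniform noise of +/- half a level as a stand-in dither
            x += rng.uniform(-0.5, 0.5)
        out.append(min(255, max(0, round(x))))
    return out

# A smooth 16-bit ramp whose values mostly fall between 8-bit levels, as after edits
ramp16 = list(range(0, PS16_MAX + 1, 7))
plain = to_8bit(ramp16)
dithered = to_8bit(ramp16, dither=True)
changed = sum(1 for a, b in zip(plain, dithered) if a != b)
print(f"{changed} of {len(ramp16)} pixels changed by dither alone")
```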

---

By working in 16 bits/channel, values still are rounded but the number of levels means that the errors are much smaller and it takes an awful lot of cumulative adjustments before any become visible (if they ever become visible at all).

At least in your torture test on a synthetic gradient, about the worst kind of data to test this on, the differences between the edits on the 8-bit per color image and on the 16-bit document are indeed invisible.

So, convert JPEGs to 16 bits to edit? If it makes you feel better, or if you charge by the hour, sure.

The same as doing the edits on actual high-bit data? Nope. Not unless both of our tests, as just shown, are invalid.

I don't think so, but I have an open mind.

---

Did your test again, Dave, from scratch: same results. Subtract, Calculations, everything still shows the same results as I saw with the Granger Rainbow.

Saved TIFFs are here if you wish to look at them:

https://www.dropbox.com/sh/fqo8gpaeujz1zfw/AABC6dhX9aV11XjI35kwCjs0a?dl=0

I also converted the results of your tests (two TIFFs) to device values in ColorThink Pro, even allowing one to be in 16-bit and the other in 8-bit (not converting the higher-bit file to 8-bit). The differences between the two images are invisible! We'd need to see a dE above 1 and change, and that's not happening, even comparing the differing bit depths after those edits. CTP has x.xx precision here.

This is a 60,000-pixel analysis (using the full-sized image would choke CTP). I used Nearest Neighbor to resample the 3K x 2K image to 300 x 200 pixels.

| Subset | Colors | Average dE | Max dE | Min dE | StdDev dE |
|---|---|---|---|---|---|
| Overall | 60000 | 0.18 | 1.60 | 0.00 | 0.32 |
| Best 90% | 53999 | 0.10 | 0.70 | 0.00 | 0.21 |
| Worst 10% | 6001 | 0.92 | 1.60 | 0.70 | 0.21 |
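For readers unfamiliar with this kind of report, here is a rough Python sketch of how such summary rows can be computed from a list of per-pixel dE values. Assumptions: dE is computed with the simple CIE76 formula (ColorThink Pro may well use another, such as dE2000), the standard deviation is the population version, and the best/worst split is a plain floor at 90%. ColorThink Pro's own split put 53,999 colors in the best group and 6,001 in the worst, so its rule evidently differs slightly. The dE values in the example are made up, not the measurements above.

```python
import math
import statistics

def delta_e76(lab1, lab2):
    # CIE76 colour difference: Euclidean distance between two L*a*b* triples
    return math.dist(lab1, lab2)

def summarize(values):
    return {
        "average": statistics.fmean(values),
        "max": max(values),
        "min": min(values),
        "stddev": statistics.pstdev(values),  # ASSUMPTION: population std dev
    }

def de_report(values, best_fraction=0.9):
    ordered = sorted(values)
    cut = int(len(ordered) * best_fraction)  # ASSUMPTION: simple floor split
    return {
        "overall": summarize(ordered),
        "best": summarize(ordered[:cut]),
        "worst": summarize(ordered[cut:]),
    }

# Made-up dE values for illustration only
toy = [0.0, 0.0, 0.1, 0.1, 0.2, 0.2, 0.3, 0.4, 0.7, 1.6]
report = de_report(toy)
print(delta_e76((50, 0, 0), (50, 3, 4)))
print(report["worst"])
```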

Again, based on my testing and colorimetric values, I see zero reason to convert 8-bit per color data to 16-bit, even using your admittedly good torture test on those gradients. On an actual photo? Even more invisible.

If I'm doing something wrong in creating your tests or in the analysis of the data, please let me know.
