Color profile question - HLG color profiles

Hi

I have an issue that drives me bonkers.

I recorded some video footage with my DJI Mavic 3 Cine drone and imported the clips into Premiere Pro. To establish a baseline for the colors BEFORE doing any color correction, I always export a tiny clip for reference, to check that what I see in the Premiere Pro project matches what I export. But here's the thing that drives me crazy:

- The original and the exported clip have the same colors - that's great

- The media I see within Premiere Pro appears to have lost a bit of vibrance

THIS MAKES COLOR GRADING IMPOSSIBLE! I need to know exactly what I can expect once I export the media.

So to follow how the media was born, here are the specs for the media for each step:

1. DJI video settings:

HLG, ProRes 422 HQ, 5.1K (other settings such as ISO are not relevant to the issue at hand)

Below is a sample of media

2. Premiere Pro project settings

- Color management: 100 (63% HLG, 51% PQ)

- 3D LUT Interpolation: Trilinear

3. Premiere Pro sequence settings

Working color space: Rec. 2100 HLG

Codec: Apple ProRes 422 HQ

Working color space: Rec. 2100 HLG (frame size 1920 x 1080, so that I can zoom via "Set to Frame Size")

Below is a sample of the media (the colors have lost vibrance). Only one image is shown, because the source and sequence preview panes look identical.

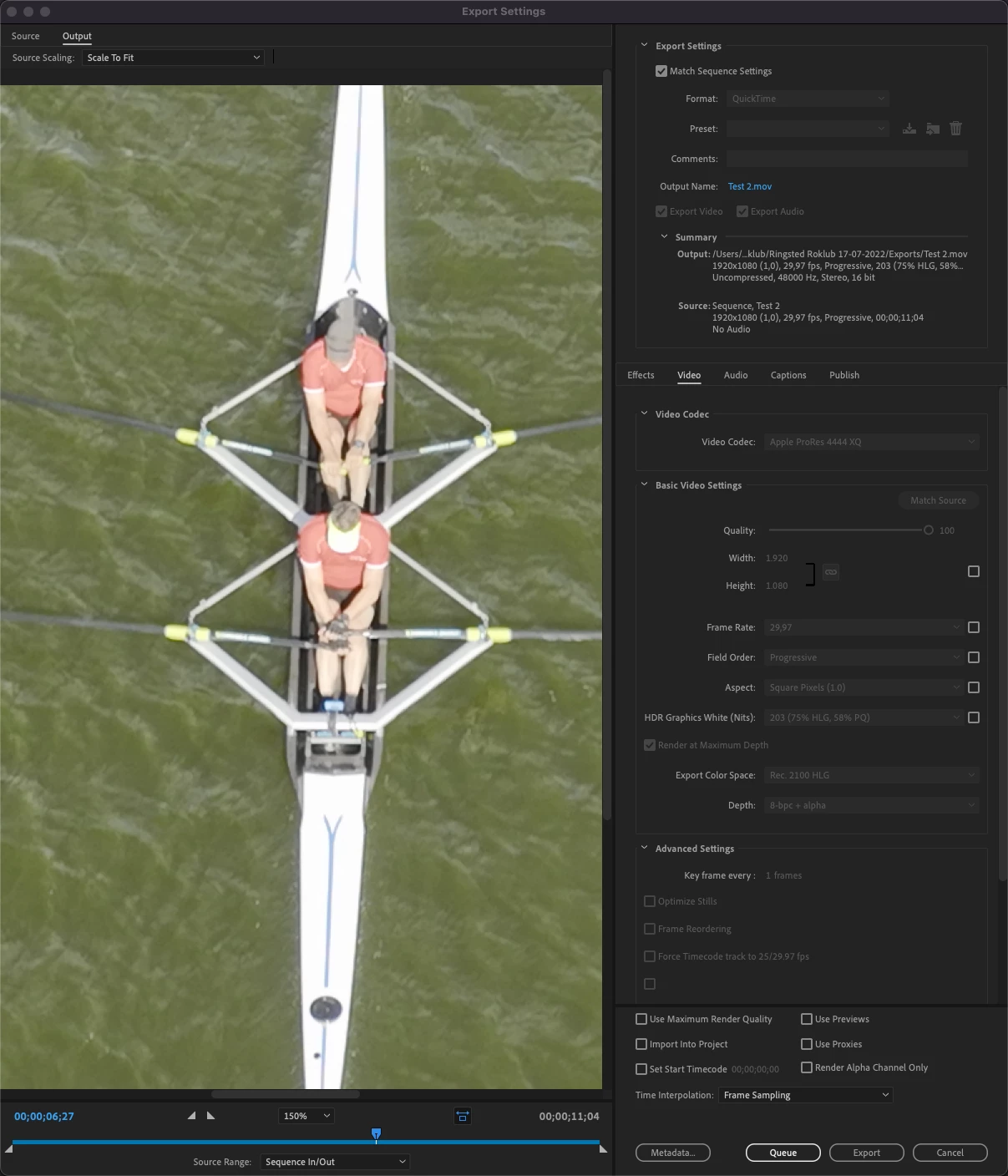

4. Premiere Pro - export settings (colors are still without vibrance):

Below is a sample of the media once exported (the color vibrance is back), which is identical to the very first screenshot.

What the heck am I doing wrong????

Basically, I want the original footage to look the same inside Premiere Pro before I do any color grading. Otherwise I can never trust a grade, because the Premiere Pro editing interface shows me media whose colors have lost their vibrance.

Any help is welcome.