
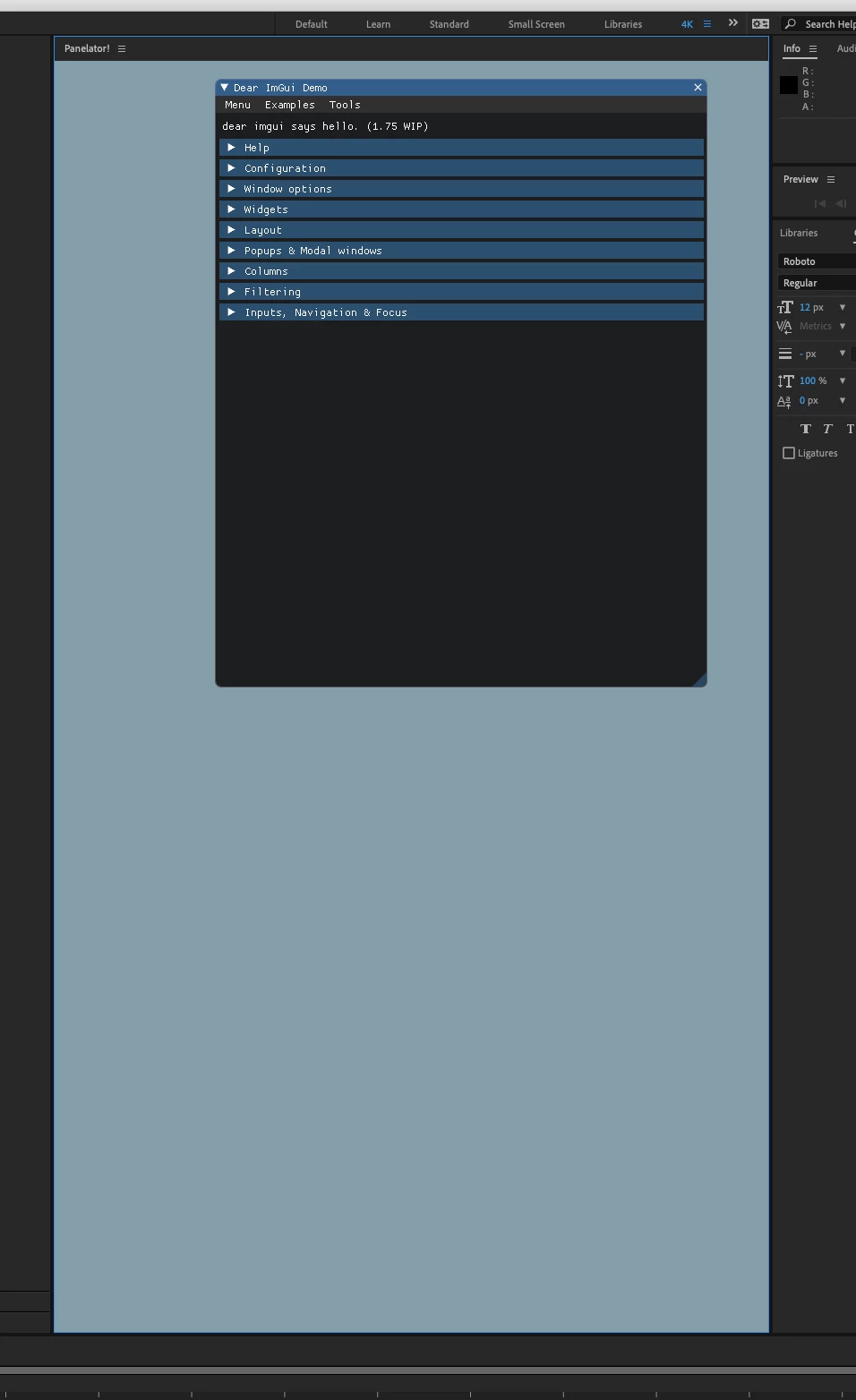

I have successfully rendered IMGUI inside the Panelator example.

The key was to create an OpenGL view as a child of the root NSView, and then to initialise IMGUI properly.
